Quick Insight

Outsourced hosting support services let hosting providers scale infrastructure and technical resolution capacity by integrating specialized engineering teams into their operational workflow. The proven 2026 model takes a hybrid approach, combining automated real-time server monitoring with senior-level architectural oversight to sustain 99.9% uptime. With white-label server support, businesses avoid the overhead of in-house L3 engineers while still delivering expert technical support under their own brand.

Technical leaders should prioritize four core elements when evaluating the 2026 scaling model for outsourced hosting support services. First, apply a “Zero-Trust” architecture across all managed servers to mitigate evolving edge-case vulnerabilities. Second, use server health monitoring tools that provide predictive analysis rather than only reactive alerts. Third, make sure your outsourced server management company offers a transparent escalation path for kernel-level debugging and cloud infrastructure issues. Finally, adopt current server security best practices to automate the mitigation of Layer 7 (application-layer) resource-exhaustion attacks.

Defining Production Uptime Through Specialized Engineering

Production uptime in 2026 depends on more than hardware redundancy; it requires deep protocol-level expertise to manage complex service interdependencies. When a hosting provider fails to resolve a critical error such as “ECONNREFUSED” or an FTP “MLSD failure,” the impact ripples through the entire client ecosystem and drives immediate churn. Outsourced hosting support services bridge the gap between basic ticket handling and senior technical architecture. Our model focuses on identifying the root cause of failures within the network stack or filesystem rather than applying temporary “reboot” fixes that leave the underlying instability in place.

Understanding the 2026 Scaling Model for Infrastructure

The current landscape of cloud infrastructure management demands a shift from manual intervention to high-density automation. Scaling breaks down when headcount has to grow linearly with server count; instead, the 2026 model uses real-time server monitoring tools to manage clusters of thousands of nodes with a lean, expert team. This approach relies on Linux server management that uses eBPF-based monitoring to catch micro-bursts in CPU usage or I/O wait before they escalate into a site outage. Effective scaling requires an outsourced server management company that can optimize containerized workloads and legacy monolithic stacks at the same time.
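
The sketch below is a minimal Python illustration of why fine-grained sampling matters; it does not use eBPF. It assumes per-second samples (for example, I/O-wait percentages) have already been collected by some agent, and the function name, window size, and threshold are hypothetical choices for illustration.

```python
from statistics import mean


def find_micro_bursts(samples: list[float], window: int, threshold: float) -> list[int]:
    """Return the start index of each window whose peak exceeds `threshold`
    while the window's average stays at or below it.

    `samples` are assumed to be evenly spaced, high-resolution readings,
    e.g. per-second I/O-wait percentages collected by some other agent.
    """
    bursts = []
    # Non-overlapping windows, like the buckets a coarse dashboard would plot.
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        # The average is what a coarse graph shows; the peak is the micro-burst.
        if max(chunk) > threshold and mean(chunk) <= threshold:
            bursts.append(start)
    return bursts


# Usage: one 95% spike inside an otherwise quiet 10-second window.
per_second = [5.0] * 9 + [95.0] + [5.0] * 10
print(find_micro_bursts(per_second, window=10, threshold=80.0))  # prints [0]
```

A 10-second average of 14% would look healthy on a dashboard, even though the workload spent a full second at 95%.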

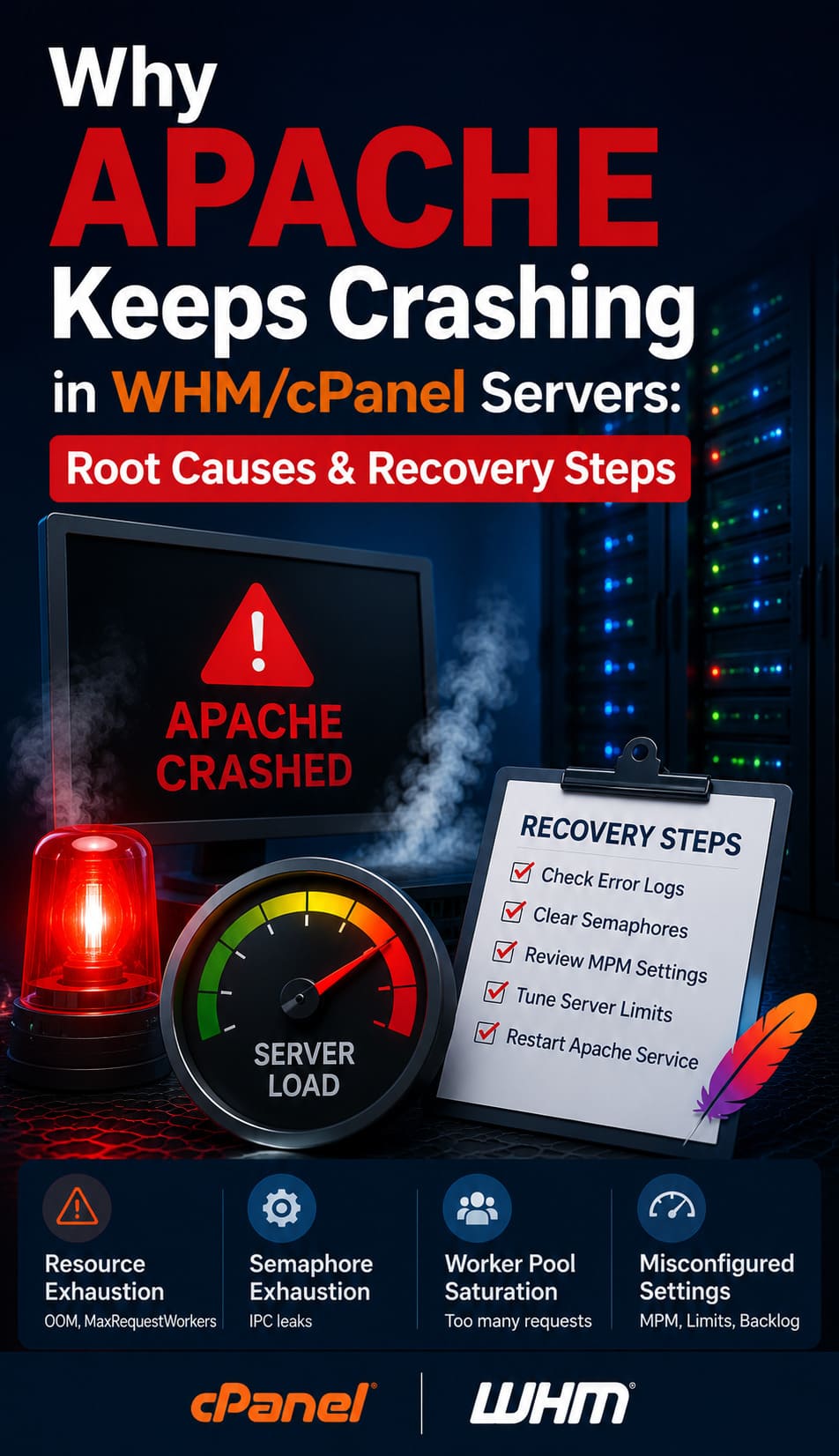

Analyzing Common Protocol Failures: Why Connections Refuse

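An “ECONNREFUSED” error means the client’s connection attempt was actively rejected: most often no process is listening on the target port (the daemon has crashed or is bound to a different address), or a firewall rule is rejecting the traffic. Listen-queue exhaustion is the closely related failure. When high CPU usage in Apache goes unaddressed, worker processes stop accepting connections fast enough, the TCP backlog fills, and the kernel stops completing new handshakes. By default, Linux silently drops the excess SYN packets, so clients typically see timeouts rather than refusals, but the result is the same: a total service lockout. Engineers should check dmesg or /var/log/messages for “Possible SYN flooding” warnings, run netstat -s to see how often the listen queue has overflowed, and compare each listening socket’s Recv-Q with its backlog in ss -lnt output; the kernel caps that backlog at net.core.somaxconn. With 24/7 server management services, engineers tune these kernel parameters proactively, preventing protocol-level failures that frustrate end users and trigger emergency support tickets.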
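
To tell a refused connection from a silently dropped one, a quick client-side probe helps. The Python sketch below uses only the standard library; `probe_tcp` is a hypothetical helper name and the 3-second timeout is an arbitrary choice. Other network errors, such as an unreachable host, are deliberately left to propagate.

```python
import socket


def probe_tcp(host: str, port: int, timeout: float = 3.0) -> str:
    """Classify a TCP connection attempt as 'open', 'refused', or 'timeout'.

    'refused' means the SYN was actively rejected (nothing listening, or a
    firewall REJECT rule). 'timeout' means the SYN went unanswered (a DROP
    rule, an overflowing listen queue, or a routing problem).
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return "open"
    except ConnectionRefusedError:
        return "refused"
    except socket.timeout:
        return "timeout"
```

A result of "timeout" on a port that should be open points toward the backlog or a DROP rule; "refused" points toward a dead daemon or a REJECT rule.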

Diagnosis of MLSD Failures in FTP Environments

“MLSD failures” usually occur during the directory-listing phase of an FTP session, because the listing travels over a separate data channel that fails to establish. The most common cause is a firewall blocking the high-numbered ports used for Passive Mode data traffic. If nmap -p 21 [IP] shows the control port as open but the client still times out on the listing, the problem lies in the passive port range negotiation. Proper cPanel server management involves explicitly defining the PassivePortRange in the FTP server configuration and opening exactly that range in the server’s firewall rules so the stateful firewall passes the data connections.
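
The passive port negotiation can be checked by hand. Per RFC 959, the server answers PASV with a 227 reply carrying six comma-separated numbers: the first four are the IP address and the last two encode the port as p1 × 256 + p2. The Python sketch below decodes that reply and tests the port against a configured range; both function names are hypothetical, it assumes the common parenthesized reply format, and the range values would come from your own FTP server configuration.

```python
import re

# The six numbers inside the parentheses of a "227 Entering Passive Mode" reply.
_PASV_RE = re.compile(r"\((\d+),(\d+),(\d+),(\d+),(\d+),(\d+)\)")


def parse_pasv_reply(reply: str) -> tuple[str, int]:
    """Return the (host, port) a server advertised in its 227 reply."""
    match = _PASV_RE.search(reply)
    if match is None:
        raise ValueError(f"not a PASV reply: {reply!r}")
    h1, h2, h3, h4, p1, p2 = (int(x) for x in match.groups())
    # The data port is sent as two bytes: high byte p1, low byte p2.
    return f"{h1}.{h2}.{h3}.{h4}", p1 * 256 + p2


def port_in_passive_range(port: int, low: int, high: int) -> bool:
    """True if `port` falls inside the configured PassivePortRange."""
    return low <= port <= high
```

For example, the reply `227 Entering Passive Mode (192,168,1,10,117,50).` advertises port 30002, which sits inside a 30000 to 35000 range; if the firewall does not open that port, the listing hangs even though port 21 is reachable.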

Identifying the Root Cause of Slow Server Performance

Administrators often ask why a server slows down over time; the usual culprits are a slow memory leak or an accumulation of zombie or runaway processes. In production, this often results from a database engine that has exhausted its buffer pool or a PHP-FPM pool with no recycling limit (pm.max_requests), so long-lived workers keep growing. Engineers should use top, htop, or vmstat to check whether the CPU is stuck in I/O wait or the system is swapping to disk. Our managed server support services include a scheduled audit of slow-query logs and process execution times to eliminate these bottlenecks before they degrade the user experience.
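
As a minimal sketch of that check, the Python below parses the text output of `vmstat 1 2` and flags high I/O wait or any swap activity. It assumes the standard procps column layout and uses the last data row, because the first row vmstat prints reports averages since boot. The 20% I/O-wait limit and the function names are illustrative assumptions.

```python
def parse_vmstat(output: str) -> dict[str, int]:
    """Return the last data row of `vmstat 1 2` output as {column: value}.

    Assumes the procps layout: a group-title line, a column-header line
    (r b swpd free buff cache si so bi bo in cs us sy id wa st ...), then
    data rows. The first data row is an average since boot, so the last
    row is the one that reflects current activity.
    """
    lines = [line.split() for line in output.strip().splitlines()]
    header, last_row = lines[1], lines[-1]
    return dict(zip(header, (int(value) for value in last_row)))


def diagnose(stats: dict[str, int], wa_limit: int = 20) -> list[str]:
    """Flag high I/O wait and any swap-in/swap-out activity."""
    findings = []
    if stats.get("wa", 0) >= wa_limit:
        findings.append(f"high I/O wait: {stats['wa']}%")
    swap_in, swap_out = stats.get("si", 0), stats.get("so", 0)
    if swap_in or swap_out:
        findings.append(f"swap activity per second: si={swap_in} so={swap_out}")
    return findings
```

Sustained non-zero `si`/`so` together with high `wa` usually means memory pressure is forcing the system to page, which is the "slow after some time" pattern described above.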

Technical Walkthrough: Resolving Connectivity in FileZilla

When troubleshooting FileZilla with the debug log level set to 3, engineers often find “Critical Error: Could not connect to server” caused by a TLS version mismatch. To fix this, open the FileZilla Site Manager and set Encryption to “Require explicit FTP over TLS” only if the server supports it. If the server uses a self-signed certificate, the user must review and explicitly trust its fingerprint when FileZilla shows the unknown-certificate prompt. Through remote server management, architects can also verify that the server’s OpenSSL libraries are current enough to support modern TLS versions and ciphers, ensuring a secure and successful handshake for every session.
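
For scripted checks, Python’s standard ftplib can reproduce an explicit-TLS session with a minimum TLS version pinned, which helps separate a client setting from a server-side OpenSSL limitation. This is a sketch, not a FileZilla equivalent; the helper names and the TLS 1.2 floor are assumptions.

```python
import ftplib
import ssl


def make_ftps_client(min_tls: ssl.TLSVersion = ssl.TLSVersion.TLSv1_2) -> ftplib.FTP_TLS:
    """Build an explicit-FTPS client (AUTH TLS on the normal control port)
    that refuses to negotiate anything older than `min_tls`."""
    context = ssl.create_default_context()  # verifies the certificate and hostname
    context.minimum_version = min_tls
    return ftplib.FTP_TLS(context=context)  # not connected yet


def list_remote_dir(host: str, user: str, password: str, path: str = "/") -> list[str]:
    """Log in over explicit TLS, protect the data channel, and list `path` with MLSD."""
    ftps = make_ftps_client()
    ftps.connect(host, 21, timeout=10)
    try:
        ftps.login(user, password)  # FTP_TLS.login() sends AUTH TLS first
        ftps.prot_p()               # encrypt the data channel as well
        return [name for name, _facts in ftps.mlsd(path)]
    finally:
        ftps.close()
```

If the handshake fails here with a protocol-version error, the server is likely limited to an older TLS version. For a server with a self-signed certificate, that certificate can be loaded into the context with `context.load_verify_locations(cafile=...)` rather than disabling verification.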

Technical Walkthrough: WinSCP and Passive Mode Configuration

WinSCP users frequently encounter “Connection timed out” errors when an FTP session uses Active Mode and the client sits behind NAT or a firewall that blocks the server’s inbound data connection. To resolve this, open Advanced Site Settings, go to the “Connection” page, and ensure “Passive mode” is checked. This makes the client initiate the data connection, which is significantly more reliable in cloud environments. Senior engineers often distribute these settings through pre-configured .ini files so enterprise teams stay consistent across the entire outsourced support workflow.

Architecture Insight: Passive vs. Active Mode Mechanics

In Active Mode FTP, the client tells the server which port it is listening on (the PORT command), and the server initiates the data connection back to the client. This frequently fails because client-side firewalls and NAT devices block unsolicited incoming connections. Passive Mode reverses this: the server tells the client which port to connect to for data (the 227 reply to PASV), and the client opens the connection. From an infrastructure perspective, Passive Mode is better suited to Linux server management because the server-side firewall only needs to allow a specific, controlled port range while remaining compatible with client-side security measures.
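
To make the Active Mode side concrete, the sketch below builds the argument of a PORT command, which uses the same six-number encoding as the 227 reply to PASV (RFC 959). It is an illustrative Python helper with a hypothetical name; Python’s own ftplib switches to this mode when `FTP.set_pasv(False)` is called.

```python
import ipaddress


def encode_port_argument(ip: str, port: int) -> str:
    """Build the argument of an Active Mode PORT command (RFC 959).

    The client advertises its own IPv4 address and a port it is listening on;
    the server then opens the data connection back to that address.
    """
    address = ipaddress.IPv4Address(ip)  # raises ValueError for invalid input
    if not 0 < port < 65536:
        raise ValueError(f"invalid port: {port}")
    high, low = divmod(port, 256)  # the port is sent as two bytes: high, low
    return ",".join([*str(address).split("."), str(high), str(low)])


print(f"PORT {encode_port_argument('203.0.113.7', 50123)}")  # prints PORT 203,0,113,7,195,203
```

If the client sits behind NAT, the address it advertises is usually a private one the server cannot reach, which is exactly why Active Mode data connections fail in that setup.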

Real-World Use Case: The CSF Firewall Port Conflict

A common finding in a cPanel server management audit is ConfigServer Security & Firewall (CSF) blocking passive ports. On a production server, if PassivePortRange in /etc/pure-ftpd.conf is set to 30000 35000 but the TCP_IN field in /etc/csf/csf.conf only allows port 21, every directory listing will fail. Resolving this requires adding the whole range to the TCP_IN string (CSF writes ranges as 30000:35000) and restarting the firewall with csf -r. This is a fundamental step in any cPanel security hardening checklist, and it prevents support-ticket spikes from users who cannot upload website content.
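
An audit for this mismatch is easy to script. The Python sketch below takes the text of pure-ftpd.conf and csf.conf (reading the files is left to the caller), extracts PassivePortRange and the TCP_IN setting, and lists passive ports that CSF would not allow. It assumes the single-line `KEY = "..."` format of stock csf.conf and CSF’s low:high range notation; the function names are hypothetical.

```python
import re


def parse_passive_range(pureftpd_conf: str):
    """Return (low, high) from a 'PassivePortRange 30000 35000' line, or None."""
    match = re.search(r"^\s*PassivePortRange\s+(\d+)\s+(\d+)", pureftpd_conf, re.MULTILINE)
    return (int(match.group(1)), int(match.group(2))) if match else None


def parse_csf_ports(csf_conf: str, key: str = "TCP_IN") -> list[tuple[int, int]]:
    """Return the port ranges in a csf.conf setting such as
    TCP_IN = "20,21,22,80,443,30000:35000". Single ports become (p, p)."""
    match = re.search(rf'^\s*{key}\s*=\s*"([^"]*)"', csf_conf, re.MULTILINE)
    if match is None:
        return []
    ranges = []
    for item in match.group(1).split(","):
        item = item.strip()
        if not item:
            continue
        low, _, high = item.partition(":")  # CSF writes ranges as low:high
        ranges.append((int(low), int(high or low)))
    return ranges


def missing_ports(allowed: list[tuple[int, int]], low: int, high: int) -> list[int]:
    """Ports in [low, high] that no allowed range covers."""
    return [port for port in range(low, high + 1)
            if not any(a <= port <= b for a, b in allowed)]
```

An empty result from `missing_ports` means every passive port the FTP server may advertise is open in CSF.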

Moving Toward SFTP and SSH Key Authentication

FTP, even with TLS, remains a legacy protocol with inherent complexity, so current server security best practices call for a transition to SFTP (SSH File Transfer Protocol). Unlike FTP, SFTP runs over a single port (default 22) and carries both commands and data in one encrypted SSH connection. Replacing passwords with SSH key authentication removes the password-guessing attack surface that brute-force tools target. As part of a robust server hardening strategy, engineers should disable password-based SSH logins and require Ed25519 or RSA keys for all remote administration.
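
On a live host, `sshd -T` prints the effective configuration and is the authoritative check. As a lightweight offline audit, the Python sketch below parses sshd_config text following two sshd rules: keywords are case-insensitive, and the first value read for a keyword wins. It is deliberately simplified: it stops at the first Match block, does not follow Include directives, assumes whitespace-separated keyword/value pairs, and ignores other password paths such as keyboard-interactive authentication. The function names are hypothetical.

```python
def effective_sshd_options(config_text: str) -> dict[str, str]:
    """Return global sshd_config options keyed by lower-case keyword.

    Mirrors two sshd rules: keywords are case-insensitive, and the first
    value read for a keyword is the one used. Simplifications: parsing stops
    at the first Match block and Include directives are not followed.
    """
    options: dict[str, str] = {}
    for raw in config_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)  # assumes "Keyword value", not "Keyword=value"
        keyword = parts[0].lower()
        if keyword == "match":
            break
        options.setdefault(keyword, parts[1].strip() if len(parts) > 1 else "")
    return options


def key_only_login_problems(options: dict[str, str]) -> list[str]:
    """List settings that conflict with key-only SSH logins."""
    problems = []
    # OpenSSH defaults both of these to "yes" when they are not set.
    if options.get("passwordauthentication", "yes").lower() != "no":
        problems.append("PasswordAuthentication is not set to 'no'")
    if options.get("pubkeyauthentication", "yes").lower() != "yes":
        problems.append("PubkeyAuthentication is disabled")
    return problems
```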

How to Fix MySQL Too Many Connections Error

The “Too many connections” error occurs when the server reaches the max_connections limit set in my.cnf, typically because slow queries hold connections open or because the application leaves idle connections in the “Sleep” state. For immediate relief, log into the MySQL shell and run SET GLOBAL max_connections = 500; (the change is lost on restart unless it is also added to my.cnf or, on MySQL 8.0 and later, applied with SET PERSIST). MySQL reserves one extra connection for accounts with administrative privileges, so an administrator can usually still log in when the limit is hit. The long-term resolution, however, involves identifying slow queries and making sure the application uses connection pooling properly. Professional AWS server management often involves offloading the database to RDS or tuning the InnoDB buffer pool so that Apache worker threads do not pile up and crash the service.
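
To see which application is holding idle connections, the rows returned by SHOW FULL PROCESSLIST can be summarised. The Python sketch below assumes the rows have already been fetched through a database driver of your choice as tuples in the server’s column order; the five-minute idle threshold and the function name are illustrative, and the generated KILL statements are meant for review, not blind execution.

```python
from collections import Counter


def idle_connection_report(rows, idle_seconds: int = 300):
    """Summarise SHOW FULL PROCESSLIST rows.

    Each row is assumed to be a tuple in the server's column order:
    (Id, User, Host, db, Command, Time, State, Info).
    Returns (per-user count of long-idle Sleep connections,
    KILL statements for an operator to review).
    """
    idle = [row for row in rows if row[4] == "Sleep" and row[5] >= idle_seconds]
    per_user = Counter(row[1] for row in idle)
    kill_statements = [f"KILL {row[0]};" for row in idle]
    return per_user, kill_statements
```

A single user dominating the idle count usually points at an application that opens connections without closing them; lowering `wait_timeout` makes the server close such idle sessions on its own.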

Resolving Disk Space 100% Errors on Linux

A server with a full filesystem fails immediately in many ways, including being unable to write session data or logs. To fix a Linux server at 100% disk usage, engineers should first locate the largest directories with du -xsh /* 2>/dev/null | sort -h. The culprit is often an unrotated log file in /var/log or a massive error log in a user’s public_html. If deleting a file does not free the space, a running process may still hold it open; lsof +L1 lists such deleted-but-open files. Clearing space is only the first step: the permanent fix is to configure logrotate and set up 24/7 server monitoring alerts that notify the team when a partition reaches 80% capacity.
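
The 80% alert can be prototyped with Python’s standard `shutil.disk_usage`. The sketch computes usage as used / (used + available), which roughly matches the Use% column of df because blocks reserved for root are not counted as free; the function names and the threshold are assumptions, and a real deployment would feed the result into your alerting system.

```python
import shutil


def partition_usage_percent(path: str = "/") -> float:
    """Percent of the filesystem holding `path` that is in use.

    Uses used / (used + available), roughly what df reports as Use%,
    so blocks reserved for root do not count as free space.
    """
    usage = shutil.disk_usage(path)
    denominator = usage.used + usage.free
    return 100.0 * usage.used / denominator if denominator else 0.0


def partitions_over_threshold(paths, threshold: float = 80.0) -> dict[str, float]:
    """Return {path: percent_used} for every path at or above `threshold`."""
    report = {}
    for path in paths:
        percent = partition_usage_percent(path)
        if percent >= threshold:
            report[path] = round(percent, 1)
    return report
```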

Preventing Brute Force Attacks in cPanel Environments

Brute-force attacks on wp-login.php or SSH ports consume significant CPU and bandwidth. To prevent brute-force attacks in cPanel, administrators should enable cPHulk and configure it to block IPs after three failed attempts. A Web Application Firewall (WAF) such as ModSecurity adds a further layer by inspecting HTTP requests against attack rule sets. Server hardening also includes changing the default SSH port and using managed server support services to monitor for unusual login patterns across the entire server fleet.
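
cPHulk tracks failed logins itself, so nothing here replaces it. To illustrate the threshold logic on raw data, the Python sketch counts OpenSSH “Failed password” lines per source IP, as found in /var/log/secure on RHEL-based systems or /var/log/auth.log on Debian-based ones, and returns the addresses that reached the limit. The regular expression covers only the two common message forms shown in the comments, and the function name is hypothetical.

```python
import re
from collections import Counter

# Matches OpenSSH lines such as:
#   "Failed password for root from 203.0.113.5 port 51234 ssh2"
#   "Failed password for invalid user admin from 203.0.113.5 port 51234 ssh2"
_FAILED_RE = re.compile(r"Failed password for (?:invalid user )?\S+ from (\S+) port \d+")


def ips_to_block(log_lines, max_failures: int = 3) -> list[str]:
    """Return source IPs with at least `max_failures` failed SSH password attempts."""
    counts = Counter()
    for line in log_lines:
        match = _FAILED_RE.search(line)
        if match:
            counts[match.group(1)] += 1
    return sorted(ip for ip, failures in counts.items() if failures >= max_failures)
```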

Real-Time Server Monitoring Tools and Techniques

In 2026, real-time monitoring tools such as Prometheus, Netdata, and Zabbix provide granular visibility into kernel and system metrics. They let engineers visualize context switches, interrupt requests, and disk latency as they happen. With these server health monitoring tools and techniques, an outsourced hosting support provider can spot a failing disk or a DDoS attack before it affects the client’s uptime. This data-driven approach is what separates senior technical architects from standard support technicians.
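
One common form of predictive analysis is forecasting when a disk will fill. Prometheus exposes this idea as the `predict_linear()` function; the Python sketch below reimplements a least-squares linear fit over (timestamp, bytes used) samples so the arithmetic is visible. The function name is hypothetical, and a linear trend is an assumption that will not hold for bursty workloads.

```python
def seconds_until_full(samples, capacity: float):
    """Estimate seconds until usage reaches `capacity` from a least-squares
    linear fit over (unix_time_seconds, used_bytes) samples.

    Returns None when the fitted trend is flat or shrinking.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in samples)
    if var_t == 0:
        raise ValueError("samples must span more than one timestamp")
    cov = sum((t - mean_t) * (v - mean_v) for t, v in samples)
    slope = cov / var_t  # growth in bytes per second
    if slope <= 0:
        return None
    last_t = samples[-1][0]
    fitted_now = mean_v + slope * (last_t - mean_t)  # fitted value at the latest sample
    return max(0.0, (capacity - fitted_now) / slope)
```

For example, usage growing by 10 units per hour and currently at 70 of 100 gives an estimate of three hours (10800 seconds), early enough to rotate logs or extend the volume before an outage.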

Cloud Infrastructure Monitoring Best Practices

Effective cloud infrastructure monitoring takes a multi-layered approach: monitoring the host, the guest OS, and the application layer. For AWS server management, this means combining CloudWatch alarms with OS-level agents. You must also monitor “steal time” in virtualized environments: high steal time means your virtual CPUs are ready to run but the hypervisor is giving those cycles to other tenants on the same physical host. White-label server support teams use this data to recommend instance migrations or upgrades, so the client’s infrastructure stays aligned with its performance requirements.
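
Steal time can be read directly from inside the guest. The Python sketch computes the steal percentage between two readings of the aggregate `cpu` line of /proc/stat, using the field order documented in proc(5). In practice you would read the file twice a few seconds apart; the function name is hypothetical, and at least eight numeric fields (Linux 2.6.11 or later) are assumed.

```python
def steal_percent(before: str, after: str) -> float:
    """Percentage of CPU time stolen by the hypervisor between two readings
    of the aggregate "cpu" line in /proc/stat.

    Field order per proc(5): user nice system idle iowait irq softirq steal
    guest guest_nice. guest and guest_nice are already included in user and
    nice, so only the first eight fields form the total.
    """
    def fields(line: str) -> list[int]:
        return [int(value) for value in line.split()[1:]]

    deltas = [b - a for a, b in zip(fields(before), fields(after))]
    if len(deltas) < 8:
        raise ValueError("expected at least eight CPU time fields")
    total = sum(deltas[:8])
    return 100.0 * deltas[7] / total if total else 0.0
```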

Senior Engineer Toolkit: Essential Commands

Architects must be fluent in a core set of tools for rapid resolution. Use tcpdump -i eth0 port 21 to capture and analyze FTP control-channel handshakes in real time (the interface name varies by host). Run firewall-cmd --list-all to verify the default zone’s allowed services and ports on RHEL-based systems. Run netstat -tulpn (or its modern equivalent, ss -tulpn) to identify which processes are listening on which ports. These commands are the backbone of remote server management, allowing precise diagnosis of connectivity issues that automated tools might misinterpret.
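
When the output of netstat -tulpn has to be checked across many hosts, parsing it helps. The Python sketch maps each listening port to its protocol and PID/program field. It assumes the net-tools output format, where UDP lines have no State column and the PID/Program field shows `-` when netstat runs without root; the regular expression and function name are illustrative, not a stable interface.

```python
import re

# One line of `netstat -tulpn` output (net-tools). UDP lines have no State
# column, and the PID/Program field is "-" when netstat is not run as root.
_LINE_RE = re.compile(
    r"^(?P<proto>tcp6?|udp6?)\s+\d+\s+\d+\s+(?P<local>\S+)\s+\S+\s+"
    r"(?:LISTEN\s+)?(?P<owner>\d+/.*|-)\s*$"
)


def listeners_by_port(output: str) -> dict[int, list[str]]:
    """Map each local port to a list of 'proto pid/program' strings."""
    result: dict[int, list[str]] = {}
    for line in output.splitlines():
        match = _LINE_RE.match(line.strip())
        if not match:
            continue  # header lines and anything unexpected
        port = int(match.group("local").rsplit(":", 1)[1])  # works for ":::22" too
        entry = f"{match.group('proto')} {match.group('owner').strip()}"
        result.setdefault(port, []).append(entry)
    return result
```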

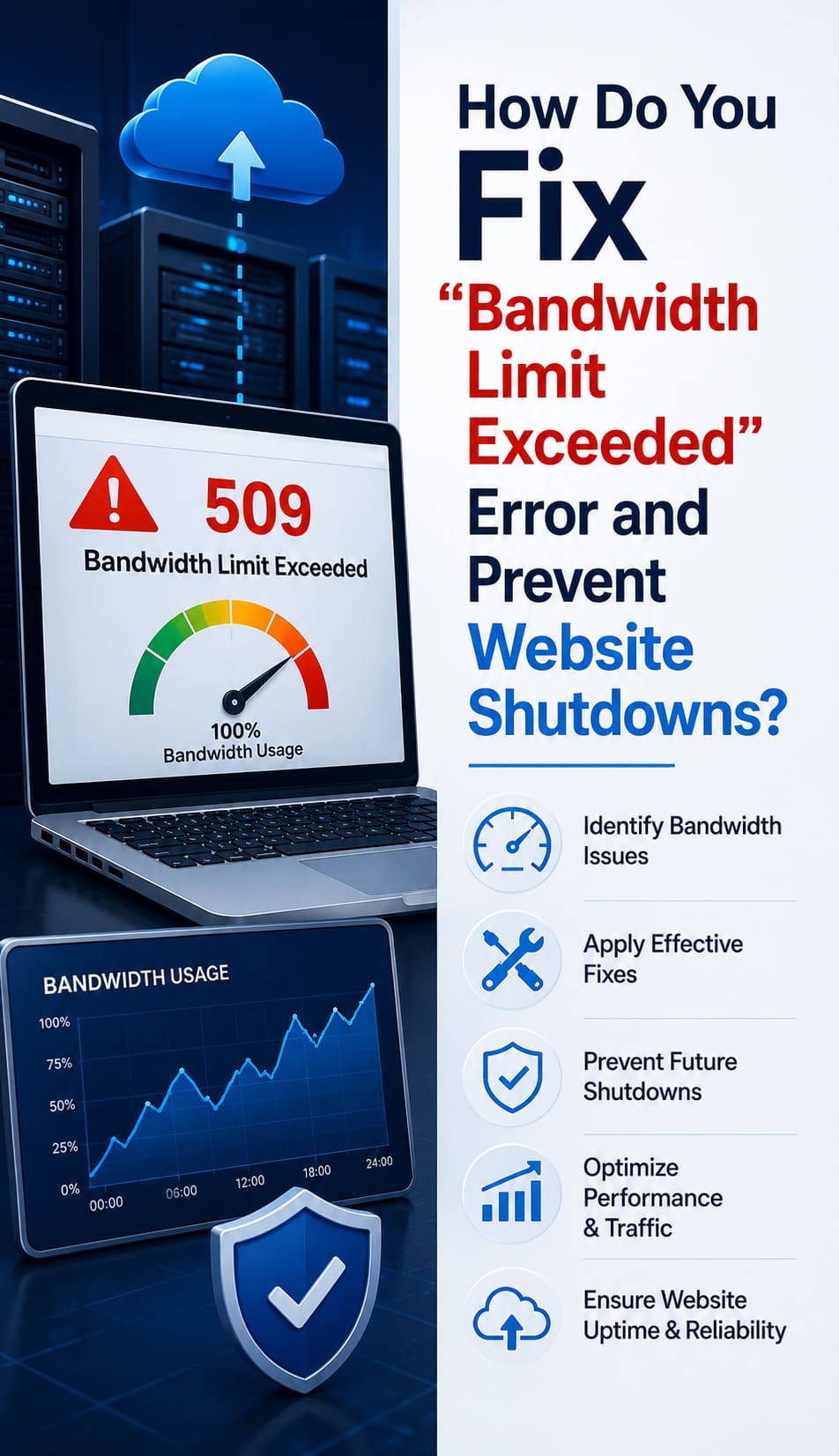

Why Businesses Need Server Monitoring

Many organizations ask why businesses need server monitoring, and the answer is rooted in financial risk mitigation. A single hour of downtime can cost thousands in lost revenue and hurt search rankings. 24/7 server monitoring provides the early warning needed to apply “hot fixes” before a service crashes. Monitoring data also provides the historical context for capacity planning, so you pay only for the cloud infrastructure resources you actually use.
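
To put an uptime target in concrete terms, the arithmetic is simple: the downtime budget is the length of the period multiplied by (1 − uptime/100). The sketch below is a hypothetical helper; at 99.9%, a 30-day month allows 43.2 minutes of downtime and a 365-day year about 8.76 hours.

```python
def downtime_budget_minutes(uptime_percent: float, period_days: float) -> float:
    """Maximum downtime, in minutes, that an uptime target allows over a period."""
    period_minutes = period_days * 24 * 60
    return period_minutes * (1 - uptime_percent / 100)


for days in (30, 365):
    minutes = downtime_budget_minutes(99.9, days)
    print(f"99.9% over {days} days: {minutes:.1f} min ({minutes / 60:.2f} h)")
```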

Benefits of Outsourcing Technical Support

The primary benefits of outsourcing technical support include 24/7 global coverage, access to high-level expertise, and significant cost savings. Hosting companies can offer managed server support services without the burden of managing an internal graveyard shift or paying for ongoing L3 training. An outsourced server management company acts as an extension of your team, providing the “Senior Infrastructure Engineer” perspective on every ticket. This partnership allows you to scale your business while your technical infrastructure remains in expert hands.

Optimizing Your Hosting Infrastructure for 2026?

Don’t let ECONNREFUSED errors or MLSD failures disrupt your business. Leverage our proven 2026 scaling model for outsourced hosting support services and ensure 99.9% uptime with specialized L3 engineering.

- Proactive 24/7 Real-Time Server Monitoring

- Advanced cPanel & Cloud Security Hardening

- Expert Root Cause Analysis & Kernel-Level Debugging

Conclusion

The proven 2026 scaling model for outsourced hosting support services rests on technical excellence, proactive monitoring, and architectural foresight. By eliminating the “fluff” of traditional support and focusing on protocol-level root cause analysis, hosting providers can deliver the stability their clients demand. Whether you are fixing high CPU usage in Apache or working through a cPanel security hardening checklist, integrating senior-level engineering is the surest way to secure a top position in the competitive hosting market.