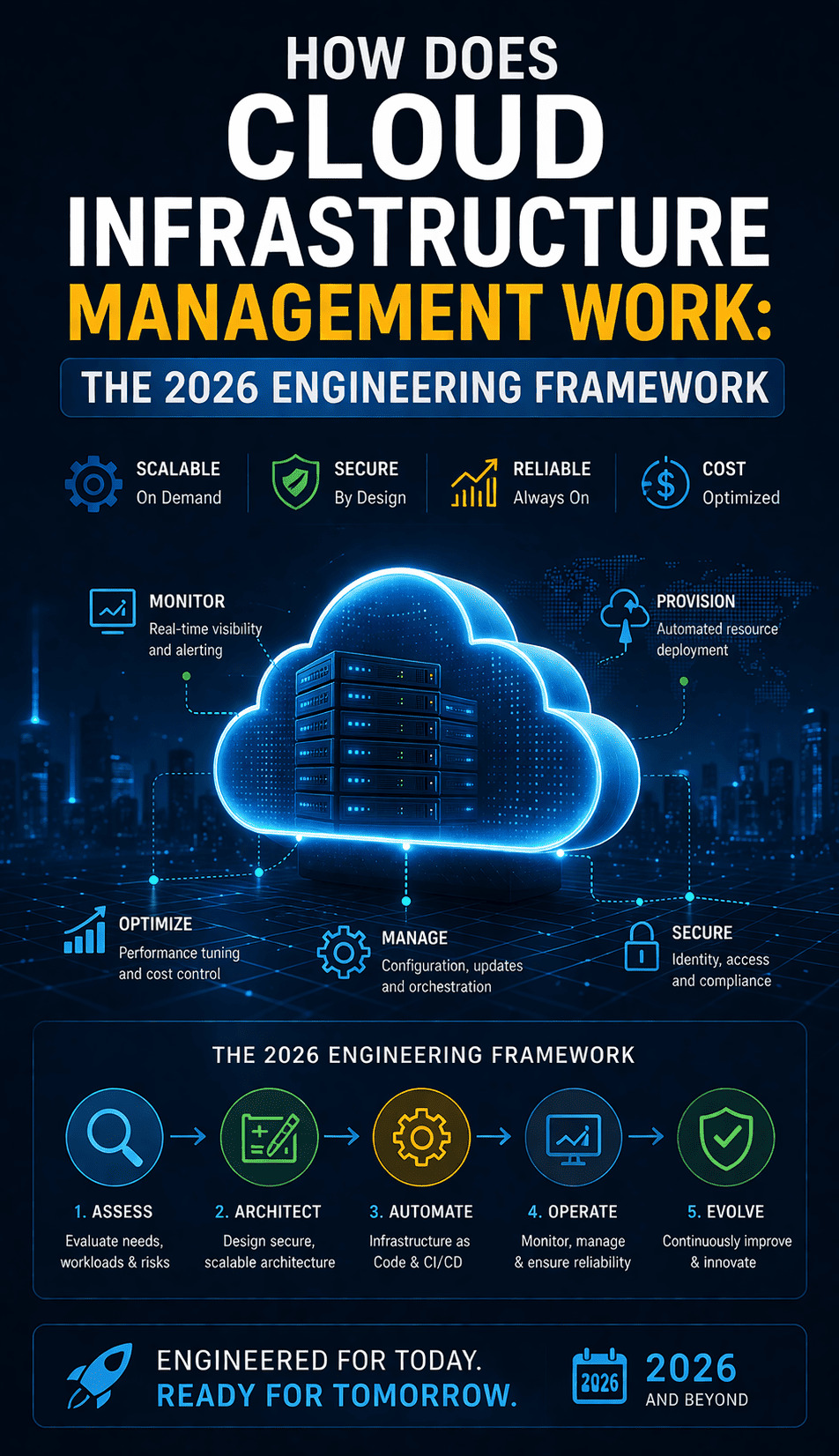

Cloud infrastructure management in 2026 is the strategic process of overseeing and optimizing a company’s digital assets, including virtual servers, storage, networking, and cloud-native services, to ensure maximum uptime, security, and cost-efficiency. It matters because, in a decentralized digital economy, poorly managed infrastructure leads to catastrophic data breaches, uncontrolled billing spikes, and performance bottlenecks that alienate users. Effective management transitions IT from a reactive “firefighting” cost center to a proactive driver of business innovation.

Understanding Cloud Infrastructure Management in the Modern Era

Cloud infrastructure management is the operational heartbeat of any modern digital enterprise. It involves the end-to-end administration of virtualized resources across public, private, or hybrid environments. In 2026, this discipline has evolved beyond simple server maintenance into a complex orchestration of microservices, serverless functions, and automated security protocols. Engineers today don’t just “fix servers”; they manage the code that defines the entire environment, ensuring that the underlying hardware abstraction layer remains invisible and frictionless for the end-user.

The core objective is to balance the “Iron Triangle” of cloud computing: performance, security, and cost. If you push for maximum performance without management, your costs will skyrocket. If you focus solely on cost-cutting, your uptime and security posture will degrade. A senior infrastructure engineer uses a combination of cloud monitoring services and automated governance to ensure these three variables stay in perfect equilibrium, regardless of traffic volatility or global scale.

Why Businesses Face Infrastructure Failure

Despite the “infinite” scalability promised by providers like AWS, Azure, and Google Cloud, businesses frequently face critical infrastructure issues. These failures rarely stem from the cloud provider’s physical hardware. Instead, they are almost always the result of architectural oversights or “configuration drift.” As systems grow, they become more complex; a minor change in a security group or a misconfigured load balancer can trigger a cascading failure that takes down an entire region’s worth of services.

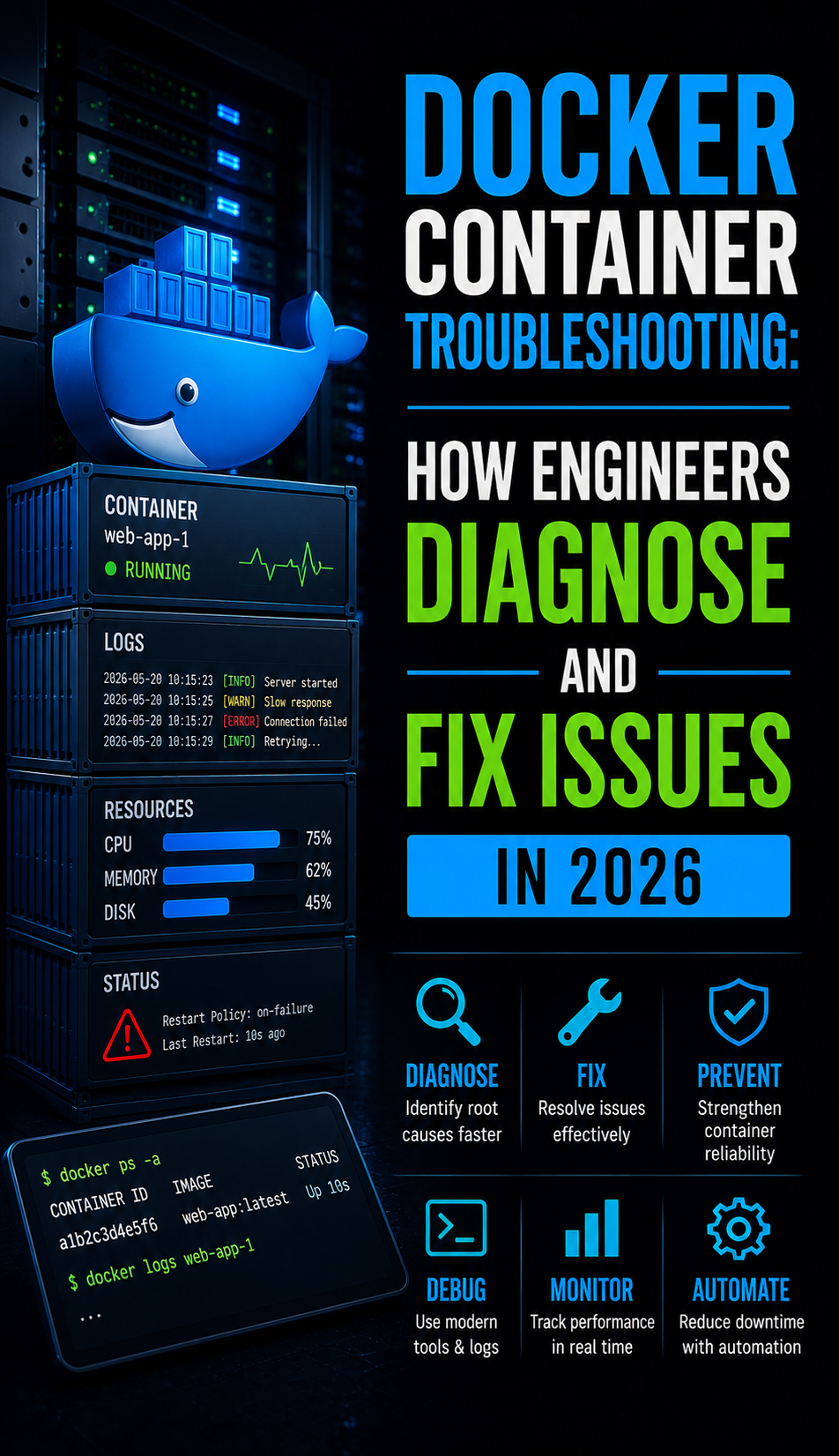

Another primary driver of failure is the lack of “observability.” Many organizations have basic monitoring (they know when a server is “up” or “down”), but they lack the deep telemetry needed to identify a memory leak or a “noisy neighbor” on a shared host. Without 24/7 NOC services and deep-packet inspection, these subtle issues grow into full-blown outages. Businesses also struggle with rapid change; in DevOps environments, teams deploy code dozens of times daily, and without rigorous automated testing, each deployment introduces potential failure risks.

The Root Causes: Why the Cloud Breaks

The root causes of cloud instability in 2026 generally fall into three categories: misconfigurations, scaling lag, and monitoring gaps. Misconfigurations are often the most dangerous because they are silent. A developer might open a port for testing and forget to close it, or an S3 bucket might be left publicly readable. These aren’t technical “bugs” in the software, but human errors in the management of the infrastructure that lead to massive security vulnerabilities.
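As a rough illustration of how a forgotten test port can be caught automatically, the sketch below scans a list of firewall rules for anything open to the whole internet. The `IngressRule` shape, the `PUBLIC_PORTS` allow-list, and the sample rules are all assumptions made for this example; a real audit would read rules from the provider’s API.

```python
from dataclasses import dataclass


# Hypothetical, simplified view of a firewall rule; real provider APIs
# return richer structures.
@dataclass(frozen=True)
class IngressRule:
    group: str
    port: int
    cidr: str


# Ports that are intentionally public in this sketch (an assumption).
PUBLIC_PORTS = {80, 443}
ANYWHERE = ("0.0.0.0/0", "::/0")


def find_open_rules(rules):
    """Return rules that expose a non-public port to the whole internet."""
    return [r for r in rules if r.cidr in ANYWHERE and r.port not in PUBLIC_PORTS]


rules = [
    IngressRule("web", 443, "0.0.0.0/0"),       # intended to be public
    IngressRule("web", 22, "10.0.0.0/8"),       # SSH limited to the internal range
    IngressRule("test-db", 5432, "0.0.0.0/0"),  # opened for testing, never closed
]

flagged = find_open_rules(rules)
for r in flagged:
    print(f"{r.group}: port {r.port} is open to {r.cidr}")
```

Run on a schedule or in a deployment pipeline, a check along these lines turns a silent misconfiguration into a visible alert.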

Scaling issues occur when the “auto” in auto-scaling isn’t tuned correctly. For example, if a “Step Scaling” policy is set to add instances only when CPU hits 90%, the new instances might take three minutes to boot up and pass health checks. By that time, the existing instances have already crashed under the load. Monitoring gaps are the final piece of the puzzle; if your monitoring tools are only polling every five minutes, a “micro-burst” of traffic can take you offline and disappear before the next poll, leaving your engineers guessing at the cause of the downtime.
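The scaling-lag problem can be made concrete with some back-of-the-envelope arithmetic. The sketch below assumes CPU usage climbs at a steady rate during a surge (a simplification; real traffic is rarely linear), and the growth rate of 10 percentage points per minute is an illustrative number, not a measurement.

```python
def peak_cpu_before_relief(threshold_pct, growth_pct_per_min, boot_minutes):
    """CPU demand on the existing fleet by the time new instances serve traffic.

    Assumes load keeps growing linearly while the new instances boot.
    """
    return threshold_pct + growth_pct_per_min * boot_minutes


def max_safe_threshold(growth_pct_per_min, boot_minutes, ceiling_pct=100.0):
    """Highest scale-out threshold that still leaves room for the boot delay."""
    return ceiling_pct - growth_pct_per_min * boot_minutes


# The 90% threshold and 3-minute boot time come from the example above;
# the growth rate is a hypothetical value.
peak = peak_cpu_before_relief(threshold_pct=90, growth_pct_per_min=10, boot_minutes=3)
safe = max_safe_threshold(growth_pct_per_min=10, boot_minutes=3)

print(f"Demand when help arrives: {peak}% of current capacity")
print(f"Scale-out threshold should be at most: {safe}%")
```

Under these assumptions, demand reaches 120% of current capacity before the new instances are ready, so the threshold would need to sit at 70% or lower.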

How Senior Engineers Optimize Cloud Infrastructure

Engineers solve these issues through a practice called “Infrastructure as Code” (IaC). Instead of manually clicking buttons in a web console, they write configuration files (using tools such as Terraform or Pulumi) that define the exact state of the infrastructure. This ensures that every environment (development, staging, and production) is built from the same definition, eliminating the “it works on my machine” problem. When an engineer needs to scale, they don’t add a server by hand; they update a variable in a configuration file, and the system handles the deployment automatically.
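The core idea behind these tools is a comparison between the state you declare and the state that actually exists. The sketch below is a toy version of that comparison in plain Python; it is not the Terraform or Pulumi API, and the `environment` and `plan` helpers and the resource names are hypothetical.

```python
def environment(name, web_count):
    """Declare one environment; every environment uses this same definition."""
    resources = {f"{name}-vpc": {"cidr": "10.0.0.0/16"}}
    for i in range(web_count):
        resources[f"{name}-web-{i}"] = {"size": "small"}
    return resources


def plan(desired, actual):
    """List the actions needed to move from the actual state to the desired one."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != spec:
            actions.append(("update", name))
    for name in sorted(actual):
        if name not in desired:
            actions.append(("delete", name))
    return actions


# Scaling means changing one variable, not adding a server by hand.
actual = environment("prod", web_count=2)
desired = environment("prod", web_count=3)
changes = plan(desired, actual)
print(changes)
```

Here the only planned action is the creation of `prod-web-2`; everything else already matches the declared state and is left alone.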

The debugging approach used by experts is highly methodical. When a CPU spike occurs, an engineer doesn’t just restart the service. They use tools like top, htop, or cloud-native profilers to identify the specific process consuming cycles. If it’s a memory leak, they examine a heap dump to find the objects that are still referenced, and therefore never freed, long after they should have been released. Only after the root cause is identified and a “Post-Mortem” is conducted do they implement a permanent fix. This level of managed cloud support makes it far less likely that the same issue happens twice.

Real-World Production Scenarios: Traffic and Cost

Consider a global e-commerce platform during a flash sale. Traffic jumps from 1,000 to 100,000 concurrent users in seconds. Without DevOps infrastructure management, the database would likely lock up as it hits its maximum connection limit. A senior engineer manages this by implementing “Read Replicas” and “Connection Pooling.” They offload the heavy read traffic to secondary database nodes, keeping the primary node free for processing transactions.
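The read-replica half of that fix comes down to a routing decision made for each query. The sketch below is a minimal version that sends `SELECT` statements to replicas in round-robin order and everything else to the primary; the `QueryRouter` class and the node names are hypothetical, and connection pooling is not shown.

```python
import itertools


class QueryRouter:
    """Route reads to replicas (round-robin) and writes to the primary."""

    def __init__(self, primary, replicas):
        self.primary = primary
        # With no replicas configured, every query falls back to the primary.
        self._replicas = itertools.cycle(replicas) if replicas else None

    def route(self, sql):
        is_read = sql.lstrip().lower().startswith("select")
        if is_read and self._replicas is not None:
            return next(self._replicas)
        return self.primary


router = QueryRouter("primary", ["replica-1", "replica-2"])
targets = [
    router.route("SELECT * FROM products WHERE id = 7"),
    router.route("SELECT * FROM products WHERE id = 8"),
    router.route("INSERT INTO orders (product_id) VALUES (7)"),
]
print(targets)
```

A production router would also need to account for replication lag and for reads that must see a write that just happened, such as an order confirmation page; those cases usually go to the primary.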

In another scenario, a startup might see their cloud bill double overnight. Upon investigation, the engineer finds that a “developer” instance was launched using a high-memory, GPU-optimized machine type that was never shut down. To fix this permanently, the engineer implements “Cloud Governance” policies that automatically terminate un-tagged resources or shut down non-production environments outside of business hours. This type of cost optimization is a core component of outsourced hosting support in 2026.
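A governance policy of this kind is essentially a small decision function applied to every resource. The sketch below assumes resources arrive as dictionaries with a `tags` field, that `owner` and `environment` are the required tags, and that business hours run from 08:00 to 19:00 on weekdays; all of these are illustrative choices rather than any provider’s defaults.

```python
from datetime import datetime

REQUIRED_TAGS = {"owner", "environment"}  # assumed tagging policy
BUSINESS_HOURS = range(8, 19)             # 08:00 to 18:59, assumed


def governance_action(resource, now):
    """Decide what to do with a resource: 'terminate', 'stop', or 'keep'."""
    tags = resource.get("tags", {})
    if not REQUIRED_TAGS.issubset(tags):
        return "terminate"  # untagged resources have no accountable owner
    off_hours = now.weekday() >= 5 or now.hour not in BUSINESS_HOURS
    if tags["environment"] != "production" and off_hours:
        return "stop"       # non-production environments sleep outside business hours
    return "keep"


late_tuesday = datetime(2026, 1, 6, 23, 0)
resources = [
    {"id": "gpu-box", "tags": {}},
    {"id": "dev-api", "tags": {"owner": "team-a", "environment": "dev"}},
    {"id": "prod-api", "tags": {"owner": "team-a", "environment": "production"}},
]
decisions = {r["id"]: governance_action(r, late_tuesday) for r in resources}
print(decisions)
```

In practice many teams start with notify-only mode before letting a policy terminate anything automatically.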

The Arsenal: Monitoring Tools for 2026

The toolkit for an infrastructure engineer has become highly specialized. While Nagios and Zabbix are still used for traditional server monitoring and maintenance, modern cloud environments demand “Full-Stack Observability.” Tools like Prometheus and Grafana have become the industry standard for gathering and visualizing time-series metrics. They allow engineers to see exactly how a change in code affects memory usage or network latency in real-time.

For those on AWS, CloudWatch provides deep integration with the underlying AWS services, offering logs, metrics, and alarms in a single pane of glass. However, many enterprises now opt for third-party tools like Datadog or New Relic, which provide “AI-powered” insights. These tools can automatically correlate a spike in error rates with a specific code deployment or a slow API response from a third-party vendor. This rapid identification is essential for maintaining high-availability Linux server management services.
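To give a feel for what correlating an error spike with a deployment means, here is a deliberately naive time-window heuristic. It is not how Datadog or New Relic work internally; the function name, the 15-minute window, and the deployment records are all assumptions for the sake of the example.

```python
from datetime import datetime, timedelta


def deployments_before_spike(deploys, spike_time, window=timedelta(minutes=15)):
    """Return deployments that finished shortly before the error spike began."""
    return [
        name
        for name, deployed_at in deploys
        if timedelta(0) <= spike_time - deployed_at <= window
    ]


# Hypothetical deployment log and spike time.
deploys = [
    ("checkout-v41", datetime(2026, 3, 2, 9, 0)),
    ("search-v17", datetime(2026, 3, 2, 13, 52)),
    ("checkout-v42", datetime(2026, 3, 2, 14, 10)),
]
spike = datetime(2026, 3, 2, 14, 0)

suspects = deployments_before_spike(deploys, spike)
print(suspects)
```

Only the deployment that landed eight minutes before the spike is flagged; the one from the morning is too old and the one after the spike cannot have caused it.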

The Impact: Performance, Scalability, and Security

When cloud infrastructure is managed correctly, the impact on the business is transformative. Performance becomes a competitive advantage; pages load instantly, and applications feel “snappy” even on low-bandwidth connections. This is achieved through Content Delivery Networks (CDNs) and “Edge Computing,” which cache data closer to the user, reducing the distance signals have to travel across the internet.

Scalability becomes “elastic,” meaning the infrastructure grows and shrinks exactly in line with demand. This ensures you never pay for idle capacity while never losing a customer due to a “Server Busy” error. Security is no longer an afterthought but is “baked in” to the infrastructure. With “Zero Trust” architectures, every service must verify its identity before communicating with another, making it nearly impossible for an attacker to move laterally through your network even if they gain an initial foothold.

Best Practices: The Engineer’s Handbook

The first rule of 2026 infrastructure is: “Automate everything.” If a task has to be done more than twice, it should be a script. This reduces human error and ensures consistency. The second rule is “Immutable Infrastructure.” Instead of patching a running server, engineers deploy a new, updated version of the server and destroy the old one. This prevents “configuration drift” where servers that started identical become different over time due to manual updates.

Another best practice is “Chaos Engineering.” Senior teams deliberately break things in production, such as shutting down a random server or inducing network latency, to prove that the system can recover automatically. If your infrastructure can’t survive a random failure on a Tuesday afternoon, it won’t survive a real disaster during a peak traffic window. This mindset is what separates standard managed cloud support from world-class infrastructure engineering.
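The shape of a chaos experiment (inject a failure, confirm the system is degraded, confirm it recovers) can be shown with a toy model. The `Fleet` class below only simulates an auto-healing instance group in memory; it is a hypothetical stand-in, not a cloud API or a chaos tool.

```python
import random


class Fleet:
    """Toy model of an auto-healing instance group."""

    def __init__(self, desired):
        self.desired = desired
        self.instances = [f"i-{n}" for n in range(desired)]
        self._next_id = desired

    def kill_random(self, rng):
        """Chaos step: remove one instance chosen at random."""
        victim = rng.choice(self.instances)
        self.instances.remove(victim)
        return victim

    def reconcile(self):
        """Recovery step: launch replacements until desired capacity is met."""
        while len(self.instances) < self.desired:
            self.instances.append(f"i-{self._next_id}")
            self._next_id += 1

    def healthy(self):
        return len(self.instances) == self.desired


def run_experiment(fleet, rng):
    """Inject one failure and report whether the fleet degraded and recovered."""
    victim = fleet.kill_random(rng)
    degraded = not fleet.healthy()
    fleet.reconcile()
    return victim, degraded, fleet.healthy()


fleet = Fleet(desired=3)
victim, degraded, recovered = run_experiment(fleet, random.Random(42))
print(f"killed {victim}, degraded={degraded}, recovered={recovered}")
```

In a real experiment the recovery step is not called by the test; the point is to observe whether the platform performs it on its own.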

Multi-Cloud vs. Single Cloud: The Great Debate

In 2026, the debate between single and multi-cloud strategies has reached a fever pitch. A single-cloud strategy (e.g., all-in on AWS) allows for deep integration and lower operational complexity. Your team only needs to master one set of tools. However, it creates “Vendor Lock-in.” If the provider raises prices or suffers a major regional outage, your business is at their mercy.

Multi-cloud involves spreading your workloads across two or more providers (e.g., AWS and Azure). This provides the ultimate level of redundancy and allows you to “cherry-pick” the best services from each. You might use Google Cloud for its AI/ML capabilities while using AWS for its robust storage solutions. The downside is massive complexity; your engineers must be experts in multiple platforms, and data transfer costs between clouds can become a significant expense. Most senior engineers now recommend a “Primary Cloud” with a “Secondary Cloud” for critical backup and disaster recovery.

Case Study: Scaling for the Global Stage

A mid-sized Fintech company was struggling with 15-minute downtimes every time they pushed a new feature to their mobile app. Their “legacy” cloud setup used manual deployments and a single large database. We transitioned them to a DevOps infrastructure management model using Kubernetes and a Microservices architecture.

By breaking the application into smaller pieces, we could update the “Login” service without touching the “Transaction” service. We implemented blue-green deployments, tested the new version in a parallel environment, and then switched traffic instantly. The result? Deployment time dropped from 40 minutes to 4 minutes, and their uptime increased to 99.995%. Most importantly, their cloud spend dropped by 22% because they were no longer over-provisioning “just in case” of a crash.
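The blue-green switch described above can be sketched as a router that only moves traffic once the idle environment passes its health check. The `BlueGreenRouter` class and the lambda health checks are hypothetical stand-ins; in a Kubernetes setup the equivalent switch is typically made at the service or ingress layer.

```python
class BlueGreenRouter:
    """Track which environment is live and switch only after a health check."""

    def __init__(self, live="blue"):
        self.live = live

    @property
    def idle(self):
        return "green" if self.live == "blue" else "blue"

    def cut_over(self, health_check):
        """Point traffic at the idle environment if it is healthy."""
        candidate = self.idle
        if not health_check(candidate):
            return False  # keep serving from the current environment
        self.live = candidate
        return True


router = BlueGreenRouter(live="blue")

# Pretend the new version deployed to "green" passes its checks.
switched = router.cut_over(lambda env: env == "green")
print(switched, router.live)

# A failing check on the next release leaves traffic where it is.
blocked = router.cut_over(lambda env: False)
print(blocked, router.live)
```

Because the previous environment stays intact after the switch, rolling back is the same operation in the opposite direction.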

Summary

To succeed with cloud infrastructure management in 2026, businesses must embrace automation, prioritize observability, and treat infrastructure as code. The goal is to create a “self-healing” environment that detects its own failures and scales its own resources without human intervention. By following the best practices of senior engineers—such as immutable deployments and chaos testing—companies can ensure their digital foundations are strong enough to support the AI-driven demands of the future.

Conclusion

The landscape of cloud infrastructure management in 2026 is demanding but rewarding. The shift from manual server management to automated, code-driven environments allows small teams to manage global networks efficiently. Whether you choose outsourced hosting support or build an in-house DevOps team, focus on three pillars: performance, security, and cost. The cloud is powerful, but it requires expert management to run at peak efficiency.