Quick Summary: The Dedicated Server Deployment Checklist

Selecting and configuring a Dedicated Server in 2026 requires a balance between high-performance hardware and a “Zero-Trust” security architecture. To ensure production uptime and scalability for AI or SaaS workloads, follow these high-level technical requirements:

- Hardware Selection: Prioritize AMD EPYC or Intel Xeon processors with ECC memory and NVMe Gen4 storage in a RAID 1 configuration to reduce I/O bottlenecks and guard against data corruption.

- Initial Security: Immediately disable root SSH logins, implement Ed25519 SSH keys, and configure a stateful firewall (UFW/Firewalld) with a “Default Deny” policy.

- Kernel & OS Hardening: Use Ubuntu 24.04 LTS or AlmaLinux 9, applying critical kernel tweaks for high-concurrency connections and enabling Secure Boot via UEFI.

- Management: Always ensure IPMI/Out-of-Band access is available for emergency recovery and establish a 3-2-1 backup strategy using off-site encrypted storage.

To choose and configure a dedicated server, you must match CPU core density and NVMe throughput to your specific workload requirements while implementing a Zero-Trust security model. Success requires selecting enterprise-grade hardware, hardening the Linux kernel, and establishing a rigorous firewall configuration to prevent unauthorized access. This guide provides the exact technical roadmap to transition from hardware selection to a production-ready, high-performance environment.

The Critical Impact of Hardware Selection on Production Uptime

Selecting the wrong hardware architecture creates a bottleneck that no amount of software optimization can fix.

In high-stakes environments like fintech or SaaS, poor disk I/O performance can increase database latency and trigger service degradation. Many organizations focus only on CPU clock speed while ignoring bus speed and memory latency. This creates major performance bottlenecks under heavy traffic.

A dedicated server is not just a collection of hardware components. It is a balanced system in which the processor, storage controller, and network interface must work together efficiently. A well-matched configuration helps prevent thermal throttling, excessive hardware interrupts, and downtime during peak traffic loads.

Identifying the Hardware Bottleneck in Enterprise Environments

Most server performance issues stem from a fundamental misunderstanding of resource contention. When a server experiences high “I/O Wait” times, the processor is idling while waiting for the storage subsystem to return data, which effectively kills application responsiveness. This often happens when developers deploy heavy write-intensive applications on standard SATA SSDs instead of PCIe Gen4 NVMe drives. Our internal testing confirms that moving to NVMe can reduce database query latency by up to 65% in high-concurrency scenarios. Understanding these hardware limits allows architects to build infrastructure that doesn’t just work, but thrives under the pressure of thousands of simultaneous users.
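To make “I/O Wait” concrete, the following is a minimal Python sketch that derives the iowait share from two samples of the aggregate cpu line in /proc/stat. The function names are hypothetical, and the sample counters in the usage note are invented for illustration rather than taken from a real server.

```python
def parse_cpu_line(line: str) -> list[int]:
    """Parse the aggregate 'cpu' line of /proc/stat into integer counters."""
    fields = line.split()
    if not fields or fields[0] != "cpu":
        raise ValueError("expected the aggregate 'cpu' line of /proc/stat")
    return [int(value) for value in fields[1:]]


def iowait_percent(before: list[int], after: list[int]) -> float:
    """Share of CPU time spent waiting on I/O between two samples."""
    # Field order: user nice system idle iowait irq softirq steal.
    # Guest time is already included in user/nice, so only the first
    # eight counters are summed.
    delta = [b - a for a, b in zip(before[:8], after[:8])]
    total = sum(delta)
    return 100.0 * delta[4] / total if total else 0.0
```

Sampling the line twice a few seconds apart and passing both results to iowait_percent gives a rough view of how much time the processor spends idle on storage; tools such as iostat or vmstat report the same figure without custom code.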

How Do I Choose the Right CPU Architecture for My Workload?

The choice between Intel Xeon and AMD EPYC depends largely on whether your application requires high single-core performance or massive multi-core density. For AI model training and large-scale virtualization, the AMD EPYC series offers high core counts and generous PCIe lane availability, allowing for better data throughput between the CPU and GPUs or high-speed networking cards. Conversely, applications that rely on legacy code or specific instruction sets often perform better on Intel Xeon Scalable processors. You must evaluate your software’s threading model before signing a long-term lease, because paying for cores your software cannot use is wasted spend.
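One way to reason about the threading model is Amdahl’s law, which is brought in here as an illustration and is not part of the original guide. The sketch below assumes you can estimate what fraction of your workload runs in parallel; the function name is hypothetical.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Theoretical speedup over one core when only part of the work scales."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)
```

For a workload that is 90% parallel, amdahl_speedup(0.9, 64) stays below 10 no matter how many cores are added, which is the kind of result that argues for fewer, faster cores rather than maximum core density.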

Why Is ECC Memory Mandatory for Dedicated Server Stability?

Standard RAM is vulnerable to “bit-flips” caused by cosmic rays or electromagnetic interference. These errors can trigger silent data corruption or unexpected system crashes.

Error Correction Code (ECC) memory detects and corrects single-bit errors in real time. This helps ensure that cached and processed data remains accurate and stable.

In dedicated server environments, where uptime is critical, running without ECC memory creates serious reliability risks. High-density servers hosting mission-critical databases cannot afford memory instability, making ECC memory an essential requirement for enterprise-grade infrastructure.

How to Determine Your Storage Requirements for 2026?

Storage is no longer only about capacity; IOPS (Input/Output Operations Per Second) and endurance matter just as much.

For boot storage, a mirrored RAID 1 setup using NVMe drives helps maintain operating system availability even if one drive fails physically. This configuration improves redundancy and reduces the risk of unexpected downtime in production environments.

Servers handling heavy logging, database activity, or video processing should also be evaluated based on the drive’s TBW (Terabytes Written) rating. Higher TBW ratings indicate better endurance for continuous write-intensive workloads.
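The TBW rating translates into an expected service life with simple arithmetic. The sketch below is a rough estimate that assumes a constant daily write volume and ignores write amplification; the function name and the figures in the usage note are hypothetical.

```python
def endurance_years(tbw_rating_tb: float, daily_writes_gb: float) -> float:
    """Years until the rated TBW is reached at a constant daily write volume."""
    if daily_writes_gb <= 0:
        raise ValueError("daily_writes_gb must be positive")
    # Datasheets use decimal units, so 1 TB is treated as 1000 GB.
    days = tbw_rating_tb * 1000 / daily_writes_gb
    return days / 365
```

A drive rated for 3650 TBW that absorbs 1000 GB of writes per day would reach its rating after about ten years by this estimate, while the same drive under 5000 GB per day would get there in about two.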

For Linux environments, software RAID using mdadm is often preferred because it offers greater flexibility, easier recovery, and simpler management compared to many entry-level hardware RAID controllers.
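With software RAID, the operating system is responsible for noticing a failed mirror member. The following Python sketch scans the text of /proc/mdstat for arrays whose member status contains an underscore, which marks a missing or failed device. The function name is hypothetical and the parsing assumes the usual mdstat layout.

```python
import re


def degraded_arrays(mdstat_text: str) -> list[str]:
    """Return names of md arrays whose member map shows a missing device."""
    degraded = []
    current = None
    for line in mdstat_text.splitlines():
        header = re.match(r"^(md\S+)\s*:", line)
        if header:
            current = header.group(1)
            continue
        # Healthy two-disk mirrors show [UU]; a failed member shows as "_".
        status = re.search(r"\[([U_]+)\]", line)
        if current and status and "_" in status.group(1):
            degraded.append(current)
    return degraded
```

Feeding it the contents of /proc/mdstat from a scheduled job gives a simple health check; mdadm --detail on the affected array then shows which member needs replacing.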

What Operating System Best Suits a High-Performance Server?

The debate between Ubuntu 24.04 LTS and AlmaLinux 9 centers on the balance between package freshness and enterprise stability.

Ubuntu provides a modern kernel and faster access to updated versions of Docker and Kubernetes. This makes it a strong choice for cloud-native SaaS platforms and containerized workloads.

AlmaLinux offers a more stable and security-focused environment with full RHEL compatibility. It is commonly preferred by financial institutions and businesses running cPanel or Plesk servers.

The best operating system choice depends on your team’s expertise and infrastructure requirements. Engineers familiar with Debian-based environments usually manage Ubuntu more efficiently, while RHEL-based systems often require deeper knowledge of SELinux and enterprise Linux administration.

How Do I Perform the Initial OS Hardening After Provisioning?

The moment a server becomes publicly accessible, it starts receiving automated brute-force and unauthorized login attempts. This makes immediate security hardening a critical first step after deployment.

Engineers should disable direct root SSH login and create a separate sudo-enabled user with limited privileges. This reduces the risk of attackers gaining full administrative access to the server.

Updating the /etc/ssh/sshd_config file to enforce SSH key authentication instead of passwords significantly improves server security and blocks most common attack vectors used in automated login attempts.
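A quick way to confirm the lockdown is to audit the configuration text itself. The sketch below is a simplified checker, not a full sshd_config parser: it ignores Match blocks and Include directives, and the names REQUIRED and audit_sshd_config are hypothetical. It relies on the documented behavior that sshd uses the first value it reads for each keyword.

```python
REQUIRED = {
    "permitrootlogin": "no",
    "passwordauthentication": "no",
}


def audit_sshd_config(text: str) -> dict:
    """Map each required keyword to its wrong value, or None if it is unset."""
    effective = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()   # drop comments
        parts = line.split(None, 1)
        if len(parts) == 2:
            # Keywords are case-insensitive and the first occurrence wins.
            effective.setdefault(parts[0].lower(), parts[1].strip().lower())
    return {
        key: effective.get(key)
        for key, wanted in REQUIRED.items()
        if effective.get(key) != wanted
    }
```

An empty result means both settings are explicitly hardened. For the authoritative view, sshd -T prints the effective configuration after all includes and defaults are resolved.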

This initial server lockdown phase forms the foundation of a secure infrastructure and should always be completed before deploying applications or production workloads.

Why Is SSH Key Authentication Better than Passwords?

Passwords, regardless of complexity, are vulnerable to phishing, keylogging, and brute-force attacks. SSH keys use a public-private key pair, and guessing the private key is computationally infeasible with current computing power. Ed25519 keys are based on a modern elliptic curve that offers high security with a small key size, which keeps authentication both fast and safe. By configuring the server to reject all password-based authentication attempts, you ensure that only clients holding a matching private key can establish a connection to the management layer.
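Generating the key pair happens on the administrator’s workstation, not on the server. As a small sketch, the helper below assembles an ssh-keygen command line for an Ed25519 key; the function name, key path, and comment are hypothetical, and the default of 100 KDF rounds is an assumption rather than a recommendation from this guide.

```python
def ed25519_keygen_command(key_path: str, comment: str, kdf_rounds: int = 100) -> list[str]:
    """Build the argument list for creating an Ed25519 key pair."""
    return [
        "ssh-keygen",
        "-t", "ed25519",          # key type
        "-a", str(kdf_rounds),    # KDF rounds protecting the passphrase
        "-f", key_path,           # where the private key is written
        "-C", comment,            # label stored in the public key
    ]
```

Passing the list to subprocess.run(..., check=True) runs the command and prompts for a passphrase; the resulting .pub file is what goes into the server user’s authorized_keys.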

How Do I Configure a Stateful Firewall Using UFW or Firewalld?

A stateful firewall tracks the state of active connections and decides which packets to allow based on that context. On Ubuntu, the Uncomplicated Firewall (UFW) lets you set a “Default Deny” policy for incoming traffic, where every port is closed except the ones you explicitly open, such as 80 (HTTP), 443 (HTTPS), and your SSH port. Running ufw limit 22/tcp (substitute your custom SSH port if you changed it) adds rate limiting: UFW denies further connections from an IP address that has attempted six or more connections within the last 30 seconds, which throttles brute-force attempts before they consume system resources. Correct firewall configuration is often the difference between a secure environment and a compromised one.
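The rule set described above can be written down as an ordered list of UFW commands. The following sketch only builds the command strings so they can be reviewed before anything is executed; the function name is hypothetical and port 2222 in the usage note stands in for whatever custom SSH port you chose.

```python
def ufw_baseline(ssh_port: int, public_tcp_ports: tuple = (80, 443)) -> list[str]:
    """Ordered UFW commands for a default-deny inbound policy."""
    if not 1 <= ssh_port <= 65535:
        raise ValueError("ssh_port must be a valid TCP port")
    commands = [
        "ufw default deny incoming",
        "ufw default allow outgoing",
    ]
    commands += [f"ufw allow {port}/tcp" for port in public_tcp_ports]
    # "limit" both allows the port and rate-limits repeated connections.
    commands.append(f"ufw limit {ssh_port}/tcp")
    # Enable last, so the SSH rule exists before filtering starts.
    commands.append("ufw enable")
    return commands
```

Calling ufw_baseline(2222) yields six commands ending in ufw enable. The ordering matters: enabling the firewall before the SSH rule is in place can lock you out of a remote session.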

What Are the Critical Kernel Tweaks for High-Concurrency Servers?

Default Linux kernel settings are tuned for general-purpose use, not for high-traffic dedicated servers handling thousands of concurrent connections. Adjust the parameters in /etc/sysctl.conf (or a drop-in file under /etc/sysctl.d/) to raise the system-wide limit on open files and to tune the TCP stack for low latency and high throughput. Increasing net.core.somaxconn raises the ceiling on each listening socket’s accept backlog, so the server can queue more pending connections during traffic spikes instead of dropping them. These kernel-level optimizations help your hardware get closer to its theoretical performance limits under real-world load.
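As an illustration of what such a tuning file might contain, the sketch below renders a handful of real sysctl keys into the key = value format that sysctl reads. The specific values are placeholders chosen for the example, not benchmarked recommendations, and the names EXAMPLE_TUNING and render_sysctl are hypothetical.

```python
EXAMPLE_TUNING = {
    "fs.file-max": 2097152,                       # system-wide open file limit
    "net.core.somaxconn": 4096,                   # cap on listen() backlog
    "net.ipv4.tcp_max_syn_backlog": 8192,         # queue of half-open connections
    "net.ipv4.ip_local_port_range": "1024 65535", # ephemeral port range
}


def render_sysctl(settings: dict) -> str:
    """Render settings as the text of a sysctl configuration file."""
    lines = [f"{key} = {value}" for key, value in sorted(settings.items())]
    return "\n".join(lines) + "\n"
```

Writing the rendered text to a file under /etc/sysctl.d/ and running sysctl --system applies it. Note that raising net.core.somaxconn only lifts the ceiling; the application must also request a larger backlog when it calls listen().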

How to Set Up Automated Backups Using the 3-2-1 Strategy?

A server without a verified backup is a liability, not an asset.

The 3-2-1 backup strategy recommends maintaining three copies of your data across two different storage media, with one backup stored off-site for disaster recovery. This approach helps protect servers from hardware failure, ransomware attacks, accidental deletion, and data corruption.

For Dedicated Servers, the strategy typically includes local snapshots for fast recovery, a secondary backup on a separate physical disk, and encrypted off-site backups to platforms like AWS S3 or remote rsync servers.

Automating backups through cron jobs ensures consistent data protection without relying on manual processes, which often fail during emergencies or unexpected outages.
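The 3-2-1 rule is easy to state and easy to drift away from, so it can help to encode it as a check. The sketch below is one possible model, with hypothetical names (BackupCopy, satisfies_3_2_1) and with the live production data counted as one of the three copies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupCopy:
    name: str
    medium: str      # for example "nvme", "hdd", "object-storage"
    offsite: bool


def satisfies_3_2_1(copies: list[BackupCopy]) -> bool:
    """True when there are 3+ copies on 2+ media with at least one off-site."""
    media = {copy.medium for copy in copies}
    return (
        len(copies) >= 3
        and len(media) >= 2
        and any(copy.offsite for copy in copies)
    )
```

Running this against an inventory of backup targets from the same cron job that performs the backups turns the policy into something that fails loudly when a copy is quietly dropped.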

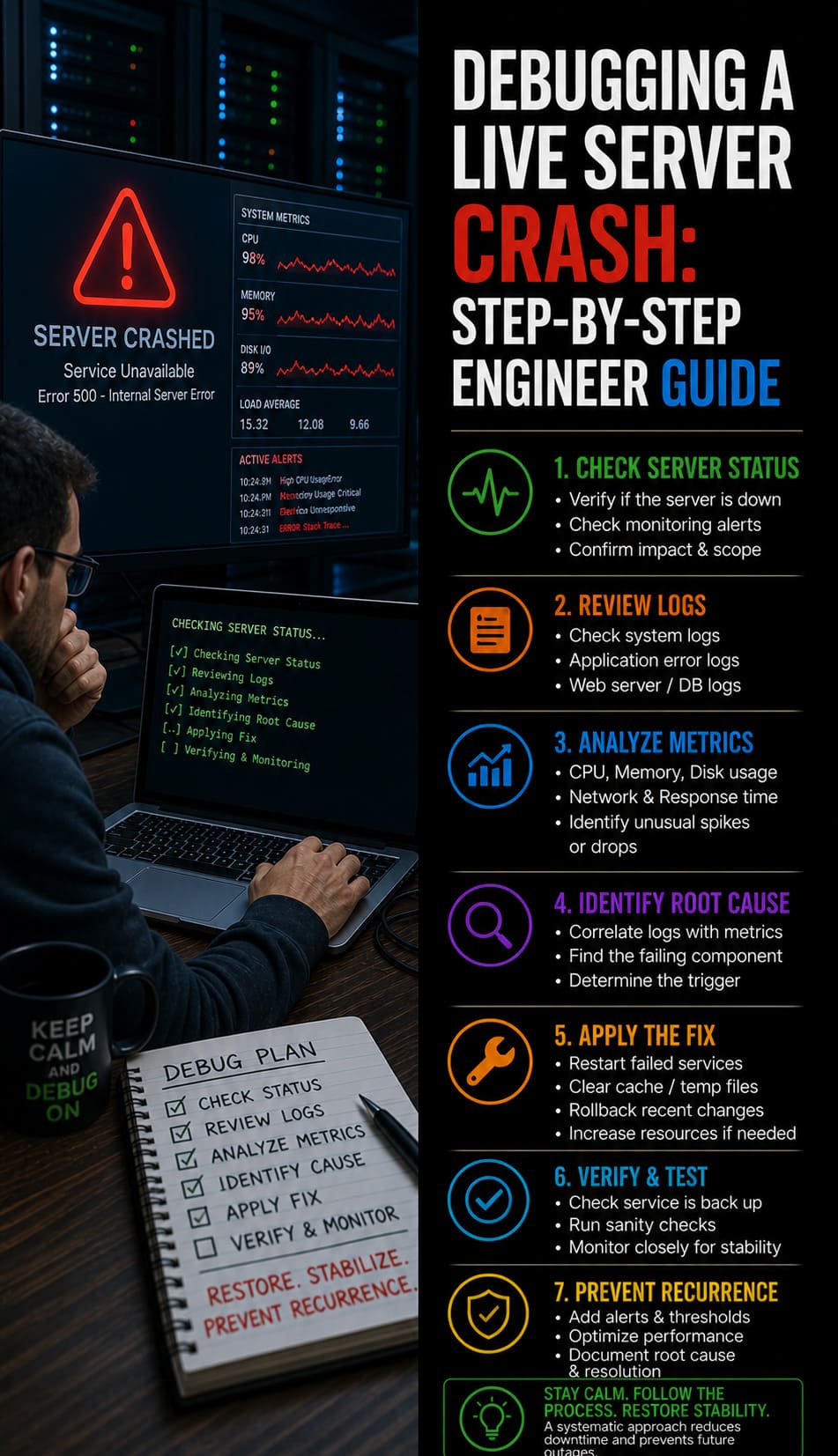

Lessons from the Field: The Cost of Ignoring Thermal Management

During a high-load migration for a fintech client, we noticed a sudden 40% drop in processing performance that was not linked to software failures. Further investigation revealed that the server was experiencing thermal throttling. The CPU had automatically reduced its speed to prevent hardware damage after the data center temperature increased unexpectedly.

This incident demonstrated why monitoring hardware sensors is just as important as tracking CPU and memory usage. Real-time monitoring of fan speeds, intake temperatures, and thermal conditions helps engineers detect overheating issues before they impact production workloads.

We now recommend continuous Intelligent Platform Management Interface (IPMI) monitoring for dedicated server environments to prevent thermal-related performance degradation and unexpected downtime.
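As a sketch of what such monitoring could look like, the code below parses temperature readings from the pipe-separated output of ipmitool sdr type Temperature. The column layout is assumed from typical ipmitool output, sensor names vary by vendor, and the names and the 85 degree limit used in the example are hypothetical.

```python
import re


def parse_temperatures(sdr_output: str) -> dict[str, int]:
    """Extract {sensor name: degrees C} from ipmitool SDR temperature output."""
    readings = {}
    for line in sdr_output.splitlines():
        columns = [column.strip() for column in line.split("|")]
        if len(columns) != 5:
            continue
        match = re.match(r"^(-?\d+) degrees C$", columns[4])
        if match:  # sensors without a reading are skipped
            readings[columns[0]] = int(match.group(1))
    return readings


def overheating(readings: dict[str, int], limit_c: int) -> list[str]:
    """Names of sensors at or above the given limit, sorted alphabetically."""
    return sorted(name for name, temp in readings.items() if temp >= limit_c)
```

A scheduled job can capture the command output, pass it through parse_temperatures, and alert on anything overheating returns, which would surface a rising intake temperature before the CPU starts throttling.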

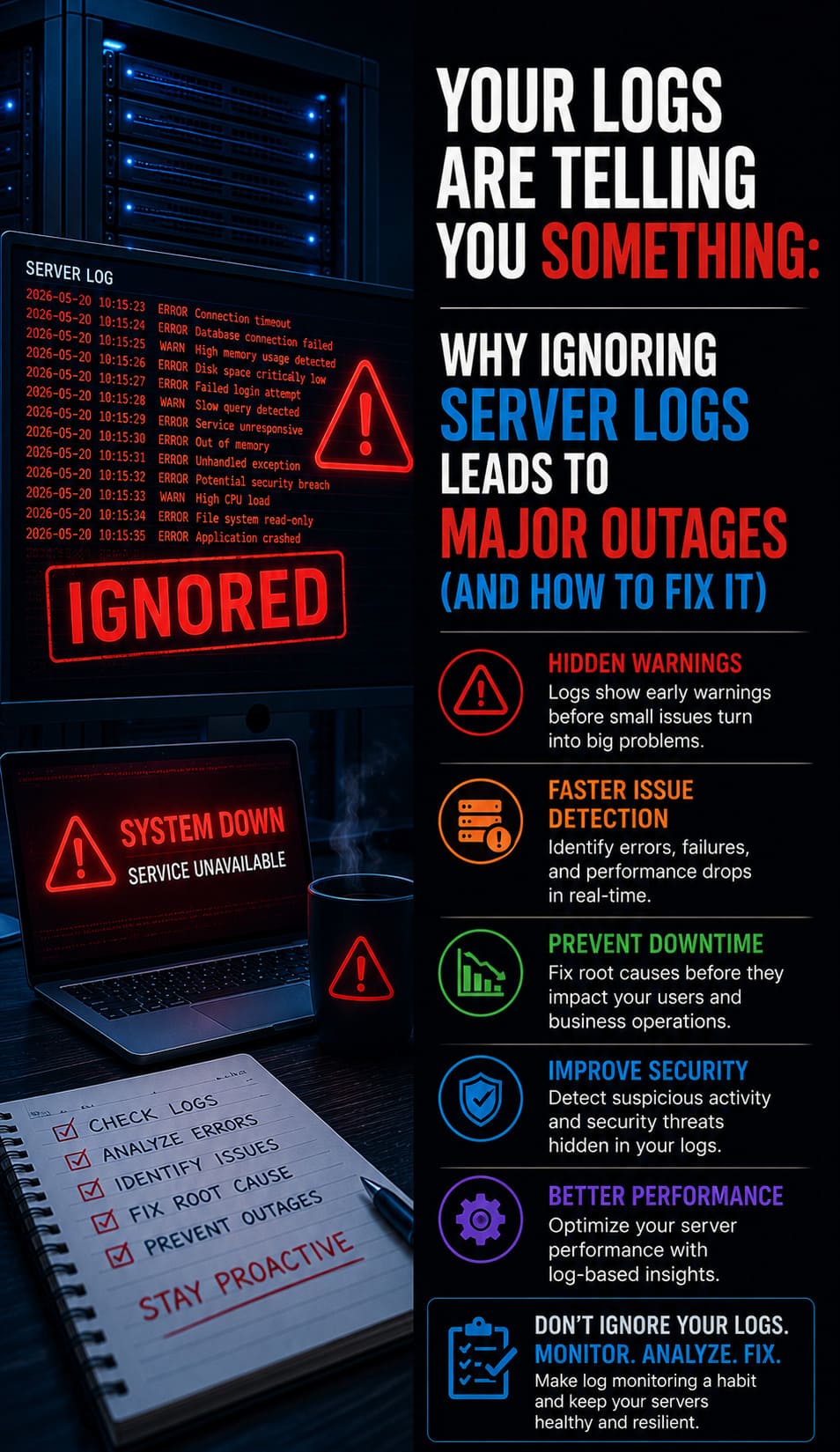

How Do I Implement Proactive Monitoring for Server Health?

Proactive monitoring uses tools like Prometheus and Grafana to track server performance, visualize system metrics, and trigger alerts when thresholds are exceeded. Instead of waiting for downtime, engineers can receive Slack or email notifications when disk usage reaches 80% or CPU load becomes unusually high.
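In a Prometheus setup the thresholds live in alerting rules, but the underlying logic is simple enough to sketch in Python. The example below is not Prometheus configuration; it only illustrates the threshold checks, and treating a 1-minute load above the core count as “unusually high” is an assumption made for the example.

```python
import os
import shutil


def evaluate(disk_percent: float, load_1m: float, cores: int,
             disk_limit: float = 80.0) -> list[str]:
    """Return human-readable alerts for the given measurements."""
    alerts = []
    if disk_percent >= disk_limit:
        alerts.append(f"disk usage {disk_percent:.0f}% >= {disk_limit:.0f}%")
    if load_1m > cores:
        alerts.append(f"1-minute load {load_1m:.2f} exceeds {cores} cores")
    return alerts


def snapshot(path: str = "/") -> list[str]:
    """Measure the local host (Linux) and evaluate it against the thresholds."""
    usage = shutil.disk_usage(path)
    disk_percent = 100.0 * usage.used / usage.total
    return evaluate(disk_percent, os.getloadavg()[0], os.cpu_count() or 1)
```

Calling snapshot() on a server returns an empty list when everything is within limits; the returned strings are what a notifier would forward to Slack or email.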

Monitoring long-term resource trends also helps teams predict future hardware upgrade requirements before performance issues affect production workloads. This approach shifts infrastructure management from reactive troubleshooting to proactive capacity planning and operational stability.

Why Is IPMI Access Essential for Out-of-Band Management?

Intelligent Platform Management Interface (IPMI) provides a dedicated hardware management channel that continues working even when the operating system becomes unresponsive or the network configuration fails. It allows engineers to remotely reboot the server, access BIOS settings, mount installation media, and reinstall the operating system without requiring physical access to the server rack.

In high-availability environments, running servers without IPMI creates major operational risks. Even small boot failures may require an on-site data center technician visit, increasing downtime and recovery time. Out-of-band management through IPMI is therefore considered a critical feature of enterprise-grade infrastructure.

How Do I Secure the Boot Process with UEFI and Secure Boot?

Modern dedicated servers should use Unified Extensible Firmware Interface (UEFI) instead of legacy BIOS for faster boot performance and improved system security. UEFI also supports advanced security features required in modern enterprise environments.

Enabling Secure Boot ensures the server loads only digitally signed bootloaders and operating system kernels from trusted sources. This helps block bootkits and malicious low-level code before the operating system even starts.
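On a running Linux system, the Secure Boot state can be read from the EFI variable filesystem. The sketch below assumes efivarfs is mounted at its usual location; the function names are hypothetical. The variable file starts with a 4-byte attribute field, followed by a single byte that is 1 when Secure Boot is enabled.

```python
from pathlib import Path

SECURE_BOOT_VAR = Path(
    "/sys/firmware/efi/efivars/SecureBoot-8be4df61-93ca-11d2-aa0d-00e098032b8c"
)


def secure_boot_enabled(raw: bytes) -> bool:
    """Interpret the raw efivarfs contents of the SecureBoot variable."""
    # Bytes 0-3 are variable attributes; byte 4 is the actual value.
    return len(raw) >= 5 and raw[4] == 1


def secure_boot_state() -> str:
    """Describe the boot mode of the local machine."""
    if not Path("/sys/firmware/efi").exists():
        return "legacy BIOS boot"
    if not SECURE_BOOT_VAR.exists():
        return "UEFI boot, SecureBoot variable not present"
    enabled = secure_boot_enabled(SECURE_BOOT_VAR.read_bytes())
    return "UEFI boot, Secure Boot enabled" if enabled else "UEFI boot, Secure Boot disabled"
```

Where the mokutil package is installed, mokutil --sb-state reports the same information from the command line.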

As cyberattacks increasingly target firmware and boot layers, securing the firmware stack has become an essential part of a defense-in-depth strategy for Dedicated Server deployments.

What Are the Best Practices for Continuous Security Auditing?

Security is a continuous process, not a one-time setup; you must regularly audit your server for open ports, outdated packages, and unusual user activity. Tools like Lynis or Tiger run automated security scans and suggest specific improvements for your configuration; Lynis also reports a “Hardening Index” that you can track over time. Regularly scheduled rootkit scans with rkhunter help detect deep-seated compromises that standard antivirus might miss. A disciplined approach to auditing ensures that your server’s security posture evolves alongside the latest threat vectors.
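The open-port part of an audit can be automated with very little code. The sketch below parses the output of ss -tlnH (listening TCP sockets, numeric, no header) and compares it against an allowlist. The column positions are assumed from typical ss output, and the function names and sample allowlist are hypothetical.

```python
def listening_ports(ss_output: str) -> set[int]:
    """Collect listening TCP ports from 'ss -tlnH' output."""
    ports = set()
    for line in ss_output.splitlines():
        columns = line.split()
        if len(columns) < 5 or columns[0] != "LISTEN":
            continue
        # Column 4 is the local address, e.g. 0.0.0.0:22 or [::]:443.
        port = columns[3].rsplit(":", 1)[-1]
        if port.isdigit():
            ports.add(int(port))
    return ports


def unexpected_ports(ss_output: str, allowed: set[int]) -> list[int]:
    """Ports that are listening but not on the allowlist."""
    return sorted(listening_ports(ss_output) - allowed)
```

Anything this returns is either a service that should be added to the documented allowlist or one that should not be running at all. Note that it also reports services bound only to localhost, which may be acceptable depending on your policy.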

How Does a Dedicated Server Support AI and Machine Learning?

AI workloads require specialized hardware configurations, specifically high PCIe lane counts to support multiple GPUs without bandwidth throttling.

When configuring a Dedicated Server for machine learning, GPU interconnect speed is often more important than raw CPU performance. High-speed communication between the CPU and GPU, or between multiple GPUs through NVLink, improves training efficiency and reduces processing delays.

Dedicated environments also provide stable power delivery and advanced cooling for continuous 24/7 model training. This helps prevent thermal throttling, unstable performance, and resource contention that commonly occur in shared cloud environments.
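The PCIe lane requirement is a budgeting exercise that can be checked before ordering hardware. The sketch below is simple arithmetic under stated assumptions: the lane counts per device and per CPU in the usage note are hypothetical and should be replaced with figures from the actual CPU and motherboard datasheets.

```python
def pcie_lane_budget(cpu_lanes: int, devices: dict[str, tuple[int, int]]) -> int:
    """Lanes left over after attaching devices given as (count, lanes each).

    A negative result means the devices need more lanes than the platform has.
    """
    used = sum(count * lanes for count, lanes in devices.values())
    return cpu_lanes - used
```

For example, four x16 GPUs, two x16 network cards, and four x4 NVMe drives need 112 lanes. On a platform exposing 128 lanes that leaves 16 spare, while on a 64-lane platform the same build is 48 lanes short and some devices would have to share or run at reduced width.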

How to Manage Multiple Servers Using Infrastructure as Code (IaC)?

When your infrastructure grows beyond a single server, manual configuration becomes impossible to maintain without introducing human error.

Tools like Ansible help engineers automate server configuration through YAML-based setup files. This ensures every Dedicated Server uses the same security patches, software versions, and user access policies across the infrastructure.
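The core idea behind this approach is comparing a declared desired state with what each server actually reports. The Python sketch below illustrates that comparison only; it is not Ansible’s API or playbook syntax, and the function name, host names, and setting keys are hypothetical.

```python
def config_drift(desired: dict, actual_by_host: dict[str, dict]) -> dict[str, dict]:
    """Map each drifting host to {setting: (desired value, actual value)}."""
    drift = {}
    for host, actual in actual_by_host.items():
        differences = {
            key: (wanted, actual.get(key))
            for key, wanted in desired.items()
            if actual.get(key) != wanted
        }
        if differences:
            drift[host] = differences
    return drift
```

A configuration management tool goes one step further by applying the change that closes each gap, and by doing so idempotently, so that running it again on a compliant server changes nothing.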

This Infrastructure as Code (IaC) approach improves consistency, reduces manual errors, and allows teams to rebuild complete server environments within minutes instead of hours. It also supports faster scaling and more reliable modern DevOps workflows.

Common Mistakes to Avoid During Server Provisioning

One of the most common server setup mistakes is failing to configure a Reverse DNS (rDNS) record for the server’s primary IP address. Without proper rDNS, legitimate outgoing emails are often marked as spam or rejected by mail providers.

Another frequent issue is not synchronizing the server clock with NTP (Network Time Protocol). Incorrect server time can break time-based security tokens, TLS certificate validation, scheduled tasks, and log correlation.

Avoiding these small but critical oversights helps your server operate reliably and integrate smoothly with global internet infrastructure from day one.

The Future of Dedicated Infrastructure in a Hybrid Cloud World

While public cloud services offer elasticity, the Dedicated Server remains the king of cost-predictability and raw performance for sustained workloads.

The future of infrastructure depends on a hybrid model. Predictable workloads run on dedicated hardware for maximum performance and efficiency. Cloud resources are then used only during sudden traffic spikes or peak demand periods.

This approach combines the power of bare metal servers with the flexibility of cloud infrastructure. It helps businesses maintain stable performance, improve scalability, and control long-term operational costs more effectively.

Future-Proofing Your Dedicated Infrastructure

Deploying a high-performance server environment requires more than just raw power; it demands a disciplined approach to hardware selection and security orchestration. To ensure your infrastructure remains a competitive asset rather than a bottleneck, keep these final takeaways in mind:

- Precision Hardware Matching: Always align your Dedicated Server specifications with your specific workload, choosing high-density cores for AI or NVMe-backed storage for high-concurrency database applications.

- Zero-Trust Security Posture: Security is never finished. Transitioning to SSH Key Authentication, changing default ports, and implementing a strict Stateful Firewall are the baseline requirements for modern server hardening.

- Operational Resilience: Maintain complete control over your hardware through IPMI/Out-of-Band management and protect your business continuity with an automated 3-2-1 backup strategy.

- Continuous Performance Auditing: Use proactive monitoring and Linux Kernel Optimization to ensure your environment scales effectively as traffic grows, preventing thermal throttling or resource exhaustion.

- Standardized Management: For multi-server environments, adopt Infrastructure as Code (IaC) and rigorous change management to eliminate human error and maintain a consistent, secure configuration across your entire fleet.

By following this technical roadmap, you ensure that your Dedicated Server provides the maximum ROI, security, and performance required for the most demanding 2026 enterprise applications.