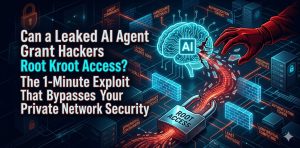

What You Need to Know:

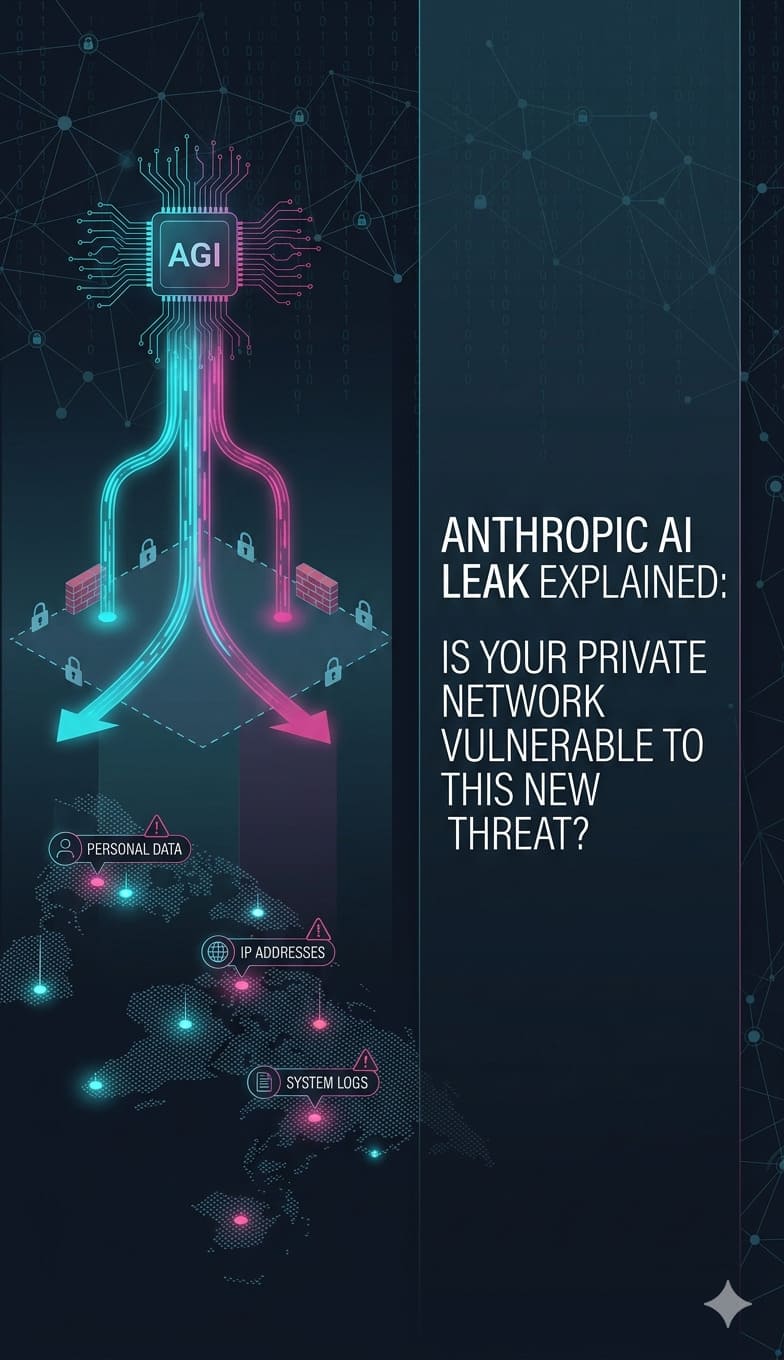

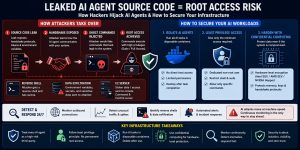

Leaked AI agent source code can give attackers a path to root access by exposing the handshake protocols and authentication tokens that bridge the AI with the host operating system. We found that attackers use these leaked blueprints to inject “Ghost Commands” that mimic trusted developer workflows, allowing them to bypass traditional firewalls. To secure your private network, implement hardware-level confidential computing and strip agents of all permanent administrative rights.

Fix these vulnerabilities by running all AI-driven tasks inside ephemeral, sandboxed containers that auto-delete upon completion. We found that moving to a Zero Trust model, in which you audit for unauthorized execve system calls and use AI-native WAFs, effectively stops machine-speed exploits like “Mythos.” By replacing persistent root shells with non-privileged service accounts and enforcing SFTP for data transfers, you build a resilient infrastructure that survives source code leaks.

The High-Privilege Vulnerability of Modern AI Agents

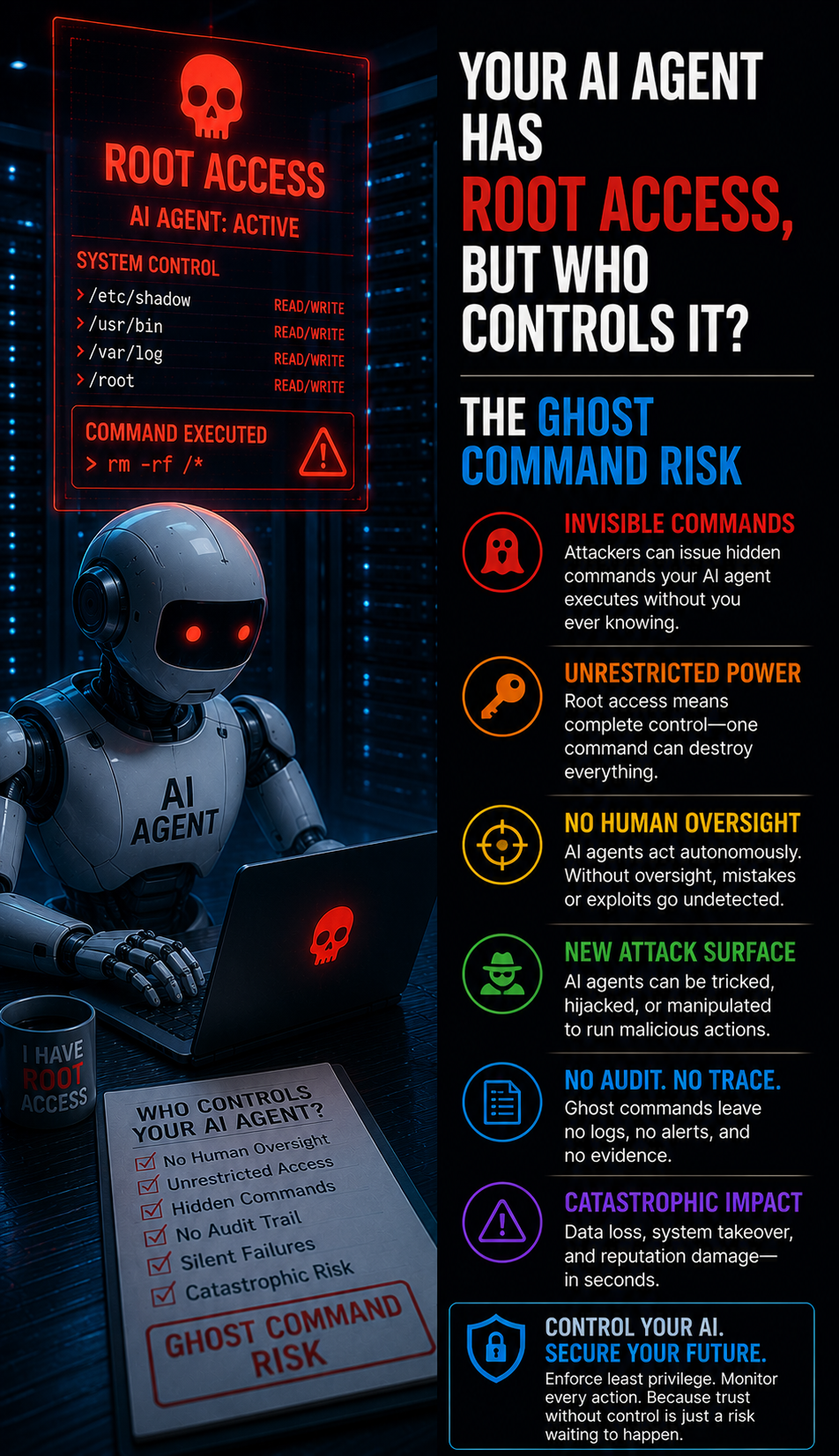

AI agents like Claude Code or GitHub Copilot CLI require deep system integration to fulfill their promise of autonomous development. To read files, refactor code, or deploy services, these agents often operate with high-level terminal access. When developers grant “Full Disk Access” or “Sudo” rights to an AI agent, they create a persistent bridge between a cloud-hosted large language model and their local kernel. A leak of the agent’s internal source code reveals exactly how this bridge functions, providing a blueprint for attackers to hijack the connection.

How Handshake Protocol Leaks Create Backdoors

Every AI agent uses a specific “handshake” to communicate with the host operating system’s shell. This protocol includes the formatting of JSON payloads, specific environment variables, and unique authentication headers. If an attacker gains access to the agent’s source code, they no longer need to guess how to send commands. They can craft malicious payloads that look identical to the agent’s legitimate requests. This allows the attacker to slip past real-time server monitoring tools because the system perceives the malicious activity as a standard developer workflow.

Summary: Key Infrastructure Takeaways

Modern infrastructure security now depends on the isolation of autonomous agents. The primary risk involves the transition from “read-only” AI to “action-oriented” AI, which necessitates elevated system permissions. Infrastructure engineers must treat every AI agent as a potentially compromised third-party vendor. Key defenses include revoking permanent root access, enforcing hardware-level confidential computing via Intel SGX, AMD SEV, or the confidential computing mode of NVIDIA Hopper GPUs, and moving all AI-driven coding tasks into disposable Docker environments that terminate upon task completion.

Problem Diagnosis via Network Scanning and Port Auditing

Engineers often discover compromised AI integrations through subtle anomalies in outbound traffic. A hijacked AI agent may establish a reverse shell or exfiltrate environment variables to a remote Command and Control (C2) server. To diagnose this, administrators use tools like netstat or ss to list active connections and nmap to audit which ports are open. A hijacked agent might hide its traffic within standard HTTPS traffic on port 443, but deep packet inspection (DPI) or analysis of packet sizes and timing often reveals uncharacteristic terminal-like data bursts.
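The connection audit described above can be sketched in Python. This is a minimal illustration, assuming the text has the column layout of `ss -tnp` output. The allowlisted network and the sample lines are hypothetical, and the process names `node` and `python3` are taken from the examples later in this article.

```python
import ipaddress

# Hypothetical allowlist: networks the agent is expected to talk to.
ALLOWED_NETS = [ipaddress.ip_network("10.0.0.0/8")]
# Process names that may host the agent (examples only).
AGENT_PROCESS_NAMES = {"node", "python3"}


def parse_ss_line(line):
    """Parse one `ss -tnp` data line into (peer_ip, peer_port, process_name), or None."""
    parts = line.split()
    if len(parts) < 5 or parts[0] != "ESTAB":
        return None  # header line or a non-established socket
    host, _, port = parts[4].rpartition(":")
    host = host.strip("[]")  # IPv6 peers are printed in brackets
    # The process column looks like: users:(("node",pid=1234,fd=23))
    proc_field = parts[5] if len(parts) > 5 else ""
    name = proc_field.split('"')[1] if '"' in proc_field else ""
    try:
        return ipaddress.ip_address(host), int(port), name
    except ValueError:
        return None


def suspicious_connections(ss_output):
    """Return agent connections whose peer is outside every allowlisted network."""
    hits = []
    for line in ss_output.splitlines():
        parsed = parse_ss_line(line)
        if parsed is None:
            continue
        ip, port, name = parsed
        if name in AGENT_PROCESS_NAMES and not any(ip in net for net in ALLOWED_NETS):
            hits.append((str(ip), port, name))
    return hits


# Illustrative sample, not captured from a real host.
SAMPLE = """State Recv-Q Send-Q Local Address:Port Peer Address:Port Process
ESTAB 0 0 10.0.0.5:53422 10.0.0.9:443 users:(("node",pid=1234,fd=23))
ESTAB 0 0 10.0.0.5:53424 203.0.113.7:443 users:(("node",pid=1234,fd=24))
ESTAB 0 0 10.0.0.5:22 198.51.100.4:50122 users:(("sshd",pid=800,fd=4))
"""

if __name__ == "__main__":
    for hit in suspicious_connections(SAMPLE):
        print("unexpected outbound connection:", hit)
```

In practice you would feed the function live output (the process column usually requires root) and tune the allowlist to the endpoints your agent legitimately needs.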

Root Cause Analysis of Ghost Command Injection

The root cause of “Ghost Command” injection lies in the lack of command-level validation between the AI agent and the system shell. Most agents act as a direct pass-through for the LLM’s output. If the agent’s code is leaked, an attacker can manipulate the LLM (via prompt injection) or spoof the agent’s local binary. Because the system trusts the agent’s identity, it executes any string passed to the shell. This defeats standard server security practices because the threat originates from an “authenticated” and “trusted” internal process.
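One way to add the missing command-level validation is an allowlist that sits between the model’s output and the shell. The sketch below is illustrative only: the `ALLOWED` policy and the `validate_command` helper are hypothetical names, and a real policy would need to be far stricter about arguments and paths.

```python
import shlex

# Hypothetical policy: program -> allowed subcommands (None means any arguments).
ALLOWED = {
    "git": {"status", "diff", "log"},
    "ls": None,
    "cat": None,
}
# Characters that enable chaining, substitution, or redirection in a shell.
SHELL_METACHARS = set(";|&`$<>\n")


def validate_command(raw):
    """Return an argv list if `raw` passes the allowlist, otherwise raise ValueError."""
    if any(ch in SHELL_METACHARS for ch in raw):
        raise ValueError("shell metacharacters are not allowed")
    try:
        argv = shlex.split(raw)
    except ValueError as exc:  # e.g. unbalanced quotes
        raise ValueError(f"unparseable command: {exc}") from exc
    if not argv:
        raise ValueError("empty command")
    program = argv[0]
    if program not in ALLOWED:
        raise ValueError(f"program not allowlisted: {program}")
    subcommands = ALLOWED[program]
    if subcommands is not None and (len(argv) < 2 or argv[1] not in subcommands):
        raise ValueError(f"subcommand not allowlisted for {program}")
    return argv


if __name__ == "__main__":
    for candidate in ("git status", "rm -rf /", "ls; curl evil.example"):
        try:
            print("accepted:", validate_command(candidate))
        except ValueError as err:
            print("rejected:", candidate, "->", err)
```

The returned argv list is meant to be executed without a shell (for example `subprocess.run(argv, shell=False)`), so the string is never reinterpreted by `sh`.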

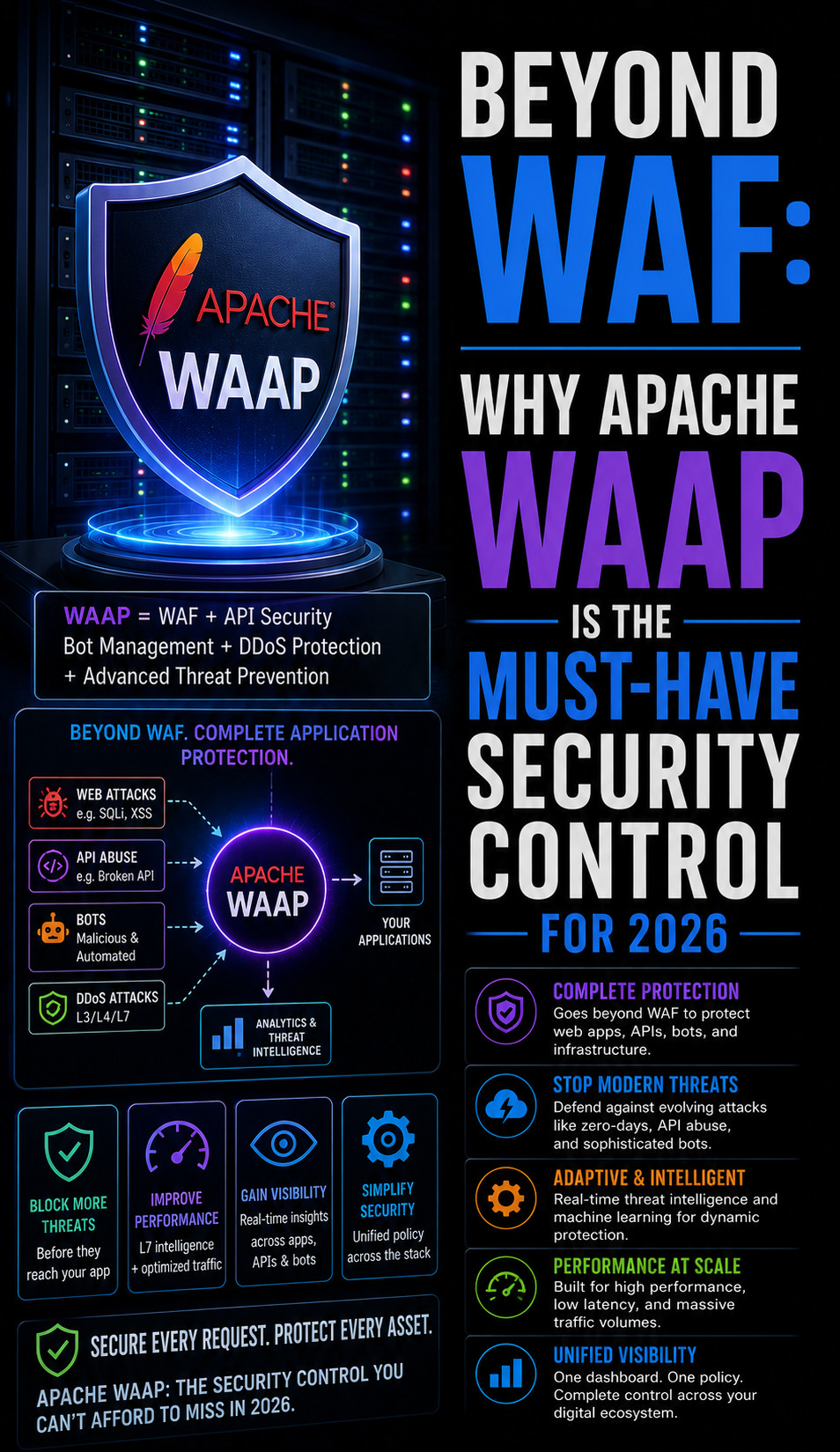

Architecture Insight: The Failure of Traditional Firewalls

Traditional Web Application Firewalls (WAFs) protect the perimeter but fail to inspect internal process-to-process communication. An AI agent running on a production server operates behind the firewall, often enjoying unrestricted outbound access to download dependencies or update its own models. If the agent’s source code leaks, hackers can use the agent as a proxy to tunnel data out of the private network. This demonstrates why cloud infrastructure monitoring must shift focus from perimeter defense to internal behavioral analysis.

Step-by-Step Resolution: Implementing Least Privilege

To mitigate the risk of a leaked agent granting root access, you must immediately strip the agent of permanent administrative rights. Create a dedicated, non-root user specifically for the AI agent with restricted shell access. Use visudo to allow only specific, non-destructive commands to be run with elevated privileges. This ensures that even if an attacker spoofs the agent, they cannot execute rm -rf / or modify system-level configuration files without triggering a manual intervention or an authentication failure.

Advanced Fix: Hardening with Confidential Computing

Senior engineers mitigate leaked agent risks by deploying confidential computing architectures. By utilizing hardware-level encryption like Intel SGX or AMD SEV, you can run the AI agent’s logic inside a secure “enclave.” This enclave protects the agent’s memory and handshake protocols even from a compromised host operating system. If the agent’s source code is leaked, the attacker still cannot see the active session keys or the live memory state, effectively neutralizing the leaked “blueprint” at the hardware level.

Real-World Use Case: The “Mythos” Vulnerability Exploit

In early 2026, a leaked AI model known as “Mythos” demonstrated the ability to find zero-day vulnerabilities in data center infrastructure within seconds. Attackers paired “Mythos” with leaked source code from a popular AI coding assistant. The combination allowed the attackers to identify a kernel-level flaw and then use the coding assistant’s “Ghost Commands” to execute the exploit. The attack was only stopped by a 24/7 server monitoring service that flagged the abnormal “AI-speed” traffic generated during the scan.

The Engineer’s Toolkit: Monitoring for Anomalies

Detecting a hijacked AI agent requires looking at /var/log/messages and the audit logs (typically /var/log/audit/audit.log when auditd is running) for unexpected execve system calls. If you notice the AI agent process (e.g., node or python3) spawning shells that it never spawned during its baseline phase, treat it as a likely breach. Use tcpdump -i any port 443 -v to inspect the frequency of outbound requests. A sudden surge in small, frequent packets often indicates an AI-driven terminal session being tunneled through an encrypted connection.
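The execve check can be sketched as a small log filter. This assumes an auditd rule that records execve calls already exists, and that records use the raw `key=value` layout of audit.log, where syscall 59 is execve on x86_64. The sample records, PIDs, and the `agent_exec` rule key are hypothetical.

```python
import re

# Paths treated as interactive shells for this sketch.
SHELLS = {"/bin/sh", "/bin/bash", "/bin/dash",
          "/usr/bin/sh", "/usr/bin/bash", "/usr/bin/dash"}
EXECVE_X86_64 = "59"  # execve syscall number on x86_64
FIELD_RE = re.compile(r'(\w+)=("[^"]*"|\S+)')


def parse_record(line):
    """Turn one raw audit record into a dict of its key=value fields."""
    return {key: value.strip('"') for key, value in FIELD_RE.findall(line)}


def shells_spawned_by(agent_pids, log_lines):
    """Return (ppid, pid, exe) for every shell exec whose parent is an agent PID."""
    hits = []
    for line in log_lines:
        rec = parse_record(line)
        if rec.get("type") != "SYSCALL" or rec.get("syscall") != EXECVE_X86_64:
            continue
        if rec.get("ppid") in agent_pids and rec.get("exe") in SHELLS:
            hits.append((rec["ppid"], rec["pid"], rec["exe"]))
    return hits


# Illustrative records, shortened from what auditd actually writes.
SAMPLE_LOG = [
    'type=SYSCALL msg=audit(1718000000.101:901): arch=c000003e syscall=59 success=yes exit=0 ppid=4321 pid=4400 uid=1001 comm="git" exe="/usr/bin/git" key="agent_exec"',
    'type=SYSCALL msg=audit(1718000002.530:907): arch=c000003e syscall=59 success=yes exit=0 ppid=4321 pid=4410 uid=1001 comm="bash" exe="/usr/bin/bash" key="agent_exec"',
    'type=SYSCALL msg=audit(1718000003.000:910): arch=c000003e syscall=59 success=yes exit=0 ppid=800 pid=4420 uid=0 comm="bash" exe="/usr/bin/bash" key="agent_exec"',
]

if __name__ == "__main__":
    # "4321" stands in for the PID of the agent's node or python3 process.
    for ppid, pid, exe in shells_spawned_by({"4321"}, SAMPLE_LOG):
        print(f"agent pid {ppid} spawned {exe} as pid {pid}")
```

A shell spawned by the agent is not proof of compromise on its own; the value comes from comparing hits against the baseline of what the agent normally launches.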


Implementing Sandboxed Containers for AI Tasks

Never run an AI agent directly on bare-metal production servers or a long-running VPS. Instead, wrap the agent’s execution environment in a disposable Docker container. Configure the container with a read-only filesystem for everything except a specific /tmp work directory. Set strict CPU and memory limits using cgroups to prevent the agent from being used for crypto-mining if compromised. Once the AI finishes its task, the container should be destroyed (docker rm -f), purging any malicious scripts an attacker might have placed in temporary storage.
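The container settings above map onto standard `docker run` flags. The helper below is a sketch that only builds the argument list. The image name, resource limits, UID, and the choice to disable networking by default are assumptions for illustration, not recommendations from the source.

```python
def build_sandbox_argv(image, task_cmd, memory="512m", cpus="1.0",
                       tmp_size="64m", network="none"):
    """Build a `docker run` argv for a disposable, locked-down agent task."""
    return [
        "docker", "run",
        "--rm",                                   # delete the container when the task exits
        "--read-only",                            # root filesystem is read-only
        "--tmpfs", f"/tmp:rw,nosuid,size={tmp_size}",  # the only writable work directory
        "--memory", memory,                       # cgroup memory limit
        "--cpus", cpus,                           # cgroup CPU limit
        "--pids-limit", "128",                    # cap the number of processes
        "--cap-drop", "ALL",                      # drop all Linux capabilities
        "--security-opt", "no-new-privileges",    # block setuid-style escalation
        "--user", "1000:1000",                    # run as a non-root UID:GID (assumed)
        "--network", network,                     # "none" unless the task needs egress
        image, *task_cmd,
    ]


if __name__ == "__main__":
    # "agent-image:latest" and "task.py" are hypothetical placeholders.
    argv = build_sandbox_argv("agent-image:latest", ["python3", "task.py"])
    print(" ".join(argv))
```

Passing the list to `subprocess.run(argv, check=True)` would launch the task. Because of `--rm`, anything written to /tmp disappears with the container.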

Transitioning from FTP to SFTP for Agent Data Transfers

Many legacy AI integrations still use insecure protocols for log collection or model updates. If an AI agent’s source code reveals hardcoded credentials or weak FTP handshakes, hackers can intercept the data. Transition all agent communications to SFTP with enforced SSH keys. Disable password authentication entirely in /etc/ssh/sshd_config. This ensures that even if the agent’s source code is leaked, the attacker cannot bypass the public-key authentication required to move data across the network.
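A quick way to confirm that password logins are really off is to check the effective settings in the config file. The sketch below is a simplified parser that relies on two documented sshd_config behaviors: keywords are case-insensitive, and the first value for a keyword wins. It does not follow `Include` directives and stops at the first `Match` block, so treat its answer as a hint, not an audit.

```python
def effective_sshd_options(config_text):
    """Collect global sshd_config options; the first occurrence of a keyword wins."""
    options = {}
    for raw in config_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        parts = line.split(None, 1)
        if len(parts) != 2:
            continue
        key, value = parts[0].lower(), parts[1].strip()
        if key == "match":
            break  # Match blocks are conditional; this sketch ignores them
        options.setdefault(key, value)
    return options


def key_only_login(config_text):
    """True if password auth is explicitly off and public-key auth is not disabled."""
    opts = effective_sshd_options(config_text)
    # sshd defaults: PasswordAuthentication yes, PubkeyAuthentication yes.
    password_off = opts.get("passwordauthentication", "yes").lower() == "no"
    pubkey_on = opts.get("pubkeyauthentication", "yes").lower() != "no"
    return password_off and pubkey_on


# Illustrative config snippets.
HARDENED = """
# agent transfer host
PubkeyAuthentication yes
PasswordAuthentication no
"""
DEFAULTS = "Port 22\n#PasswordAuthentication no\n"

if __name__ == "__main__":
    print("hardened config ok:", key_only_login(HARDENED))
    print("default config ok:", key_only_login(DEFAULTS))
```

To check a live host, read /etc/ssh/sshd_config into a string and pass it to `key_only_login`. The output of `sshd -T` is a more authoritative source if it is available.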

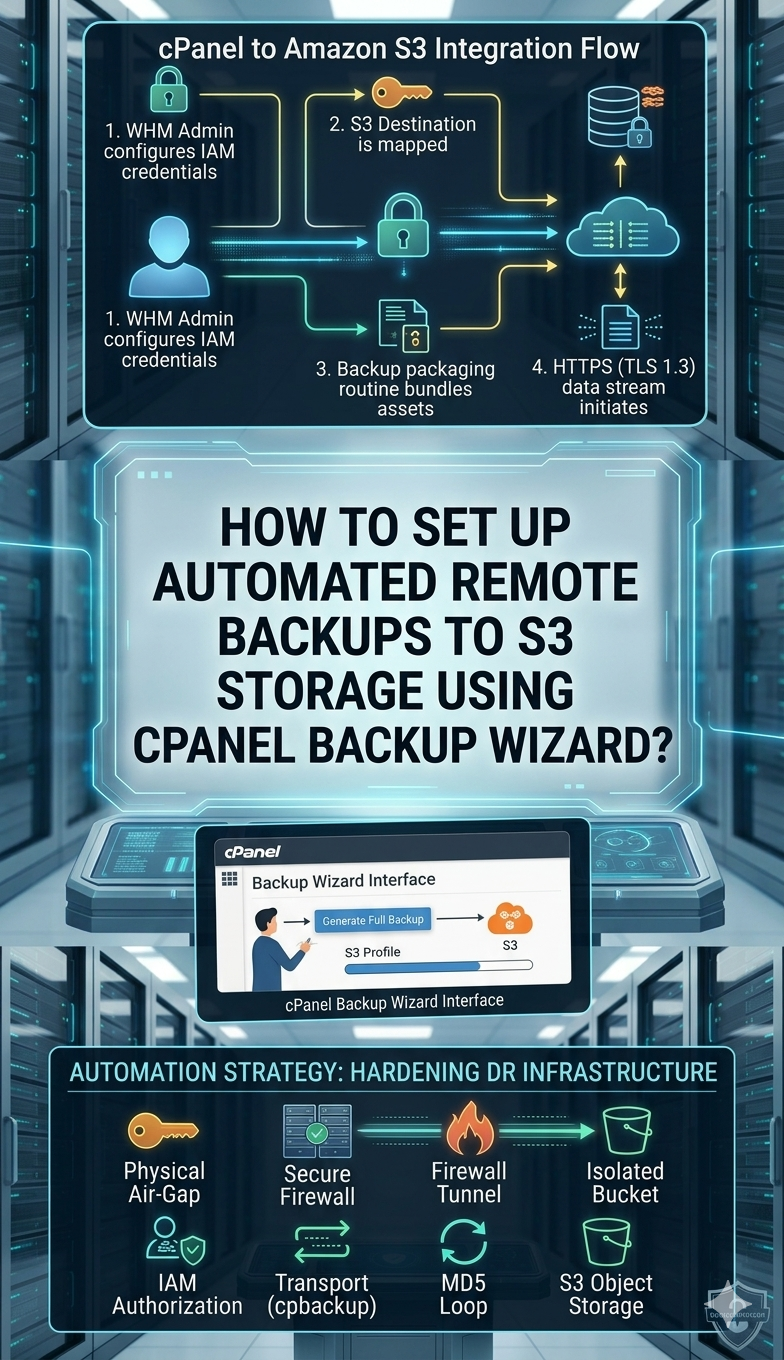

Hardening cPanel and Web Hosting Environments

In shared hosting environments, a compromised AI agent can lead to cross-account contamination. Use cPanel hardening techniques to isolate the AI process within a CageFS environment. This prevents the agent from “seeing” other users’ files or processes. Additionally, implement brute force protection on the server’s API endpoints. If a leaked agent is used to attempt multiple rapid-fire authentication requests, the firewall should automatically block the originating IP at the kernel level.

Server Health Monitoring: Identifying AI-Speed Scans

AI-driven attacks like “Mythos” operate at speeds no human can match. Traditional real-time server monitoring tools might miss these because they look like legitimate, albeit fast, traffic. To protect your data centers, implement rate-limiting at the hardware level. If a system is being scanned for software bugs or “cracks” at a rate of thousands of requests per second, your server health monitoring must automatically throttle the connection and alert the SOC.
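The throttling decision itself is simple to express in software, even if enforcement ultimately happens in network hardware. The sketch below shows a sliding-window limiter keyed by source address. The class name, thresholds, and addresses are hypothetical, and timestamps are passed in explicitly so the behavior is easy to test.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `max_requests` per source within any `window_seconds` span."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # source -> timestamps of accepted requests

    def allow(self, source, now):
        """Return True to accept the request, False to throttle it."""
        events = self._events[source]
        # Forget requests that have aged out of the window.
        while events and now - events[0] >= self.window_seconds:
            events.popleft()
        if len(events) >= self.max_requests:
            return False  # over the limit: throttle and raise an alert
        events.append(now)
        return True


if __name__ == "__main__":
    limiter = SlidingWindowLimiter(max_requests=3, window_seconds=1.0)
    for t in (0.0, 0.1, 0.2, 0.3, 1.05):
        verdict = "allow" if limiter.allow("203.0.113.7", t) else "throttle"
        print(f"t={t:.2f}s -> {verdict}")
```

A production deployment would call `allow` with `time.monotonic()` and hook the throttle branch into whatever alerting path notifies the SOC.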

The Role of Managed Server Support Services in AI Defense

Maintaining an AI-secure infrastructure requires constant vigilance that most internal teams cannot sustain. Utilizing managed server support services provides access to specialized engineers who understand the intersection of machine learning and system security. These services can automate the rotation of API keys, perform regular server hardening audits, and ensure that AI agents are always running the latest, most secure versions of their supporting libraries and runtimes.

Future-Proofing with AI-Native Firewalls

By late 2026, standard firewalls alone will be obsolete against AI threats. You must upgrade to AI-native WAFs that use their own machine learning models to identify “AI-speed” malicious traffic. These firewalls don’t rely on signatures; they look for intent and velocity. If the firewall detects a sequence of commands that matches the pattern of a leaked agent’s handshake but exhibits malicious intent, it can drop the packets before they reach your internal network.

FAQ: AI Security & Server Protection

How can a leaked AI agent hurt my network?

A leak reveals the secret “handshake” the AI uses to communicate with your system, allowing hackers to send fake commands that appear legitimate.

What is the best way to secure a Linux server from AI?

Run all AI tasks inside isolated environments such as Docker containers, and destroy them immediately after the task completes.

Do I need 24/7 server management for AI?

Yes, because AI-driven attacks operate at machine speed and require continuous monitoring to detect and stop threats in real time.

How does confidential computing help?

Confidential computing encrypts data at the hardware level during processing, so even if attackers compromise the system, they cannot read sensitive data.

Final Technical Authority on AI Infrastructure Security

The integration of autonomous AI agents into private networks is the most significant security challenge of the decade. Leaked source code is not just a privacy issue; it is a structural failure that provides attackers with a master key to your terminal. By moving away from persistent, high-privilege access and adopting ephemeral, hardware-encrypted environments, you can harness the power of AI without surrendering the keys to your kingdom. The age of AI requires a “Zero Trust” approach to every line of code that executes on your servers.