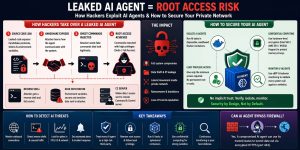

An AI agent with root access becomes a catastrophic security liability when its internal source code or handshake protocols leak. Attackers use these leaks to inject “Ghost Commands”: malicious instructions that mimic legitimate AI activity to bypass traditional firewalls and security filters. To mitigate this risk, CTOs must enforce server security best practices for 2026, including hardware-enforced confidential computing, ephemeral sandboxed containers, and a strict least-privilege model for all autonomous agents.

The Critical Vulnerability of Autonomous AI Agents in Production

Autonomous AI agents represent the next frontier of productivity, but their “action-oriented” nature creates an unprecedented attack surface. Unlike traditional software that follows static logic, AI agents often possess the authority to execute terminal commands, modify system files, and manage cloud resources. When a developer grants an AI agent root-level permissions to expedite DevOps tasks, they effectively install a permanent, high-privilege bridge between an external Large Language Model (LLM) and the internal kernel. If the agent’s identity or communication method is compromised, the entire private network becomes vulnerable to total takeover within seconds.

The Anatomy of the Ghost Command Exploit

The “Ghost Command” exploit occurs when an attacker reverse-engineers leaked AI agent code to spoof its communication handshake. Each AI agent uses a specific protocol to interact with the operating system. This includes JSON structures, authentication headers, and environment variables. By mimicking these patterns, attackers send commands that appear legitimate. The server treats them as trusted AI activity. As a result, it executes the attacker’s instructions without triggering alerts. This allows silent data exfiltration or privilege escalation.

Key Infrastructure

Infrastructure resilience in 2026 relies on the complete isolation of autonomous processes. Every AI agent must be treated as an untrusted third-party entity, regardless of its origin. CTOs should prioritize the revocation of all permanent administrative tokens and replace them with short-lived, task-specific credentials. Moving AI workloads into disposable environments ensures that even a successful exploit only grants access to a temporary “room” that disappears upon task completion. This shift from perimeter-based security to process-level isolation is mandatory for modern cloud infrastructure management services.
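As a rough illustration of what “short-lived, task-specific credentials” can mean in practice, the sketch below issues a signed token bound to one task and one expiry time. The names `issue_token`, `verify_token`, and `SIGNING_KEY` are hypothetical, and the token format is an assumption made for this example rather than any particular product's scheme; a production setup would more likely rely on an existing secrets manager or identity provider.

```python
import base64
import hashlib
import hmac
import json
import time

# Hypothetical demo key; in practice this would come from a secret store.
SIGNING_KEY = b"demo-only-key"


def issue_token(agent_id, task, ttl_seconds=300, now=None):
    """Return a signed token that names one task and expires after ttl_seconds."""
    now = time.time() if now is None else now
    claims = {"agent": agent_id, "task": task, "exp": now + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    sig = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + sig


def verify_token(token, task, now=None):
    """Accept the token only if the signature, task name, and expiry all check out."""
    now = time.time() if now is None else now
    try:
        payload, sig = token.split(".", 1)
    except ValueError:
        return False
    expected = hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison on bytes avoids timing leaks and non-ASCII errors.
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload.encode()))
    return claims["task"] == task and now < claims["exp"]
```

A token issued for one task is rejected for any other task and for any use after its expiry, so a stolen token has a narrow window and a narrow scope.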

Problem Diagnosis via Behavioral Anomaly Detection

Detecting a hijacked AI agent requires moving beyond signature-based scanning to behavioral analysis. Engineers use tcpdump to capture the traffic of AI-related processes and nmap to check which ports a host exposes. A compromised agent typically exhibits uncharacteristic bursts of outbound data on port 443 or attempts to scan internal subnets. If netstat -tulnp reveals a process associated with an AI agent listening on unexpected ports, it may indicate a bind shell or another backdoor listener; a reverse shell, by contrast, shows up as an unexpected outbound connection in netstat -tnp. Identifying these “cracks” in the infrastructure is a core function of real-time server monitoring tools in 2026.
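The listening-port check described above can be automated. The following is a minimal sketch that scans text in the usual `netstat -tulnp` column layout and reports ports owned by a given process that are not on an allowlist. The process name `agentd`, the sample output, and the function name are assumptions for illustration; real output can vary between netstat versions.

```python
import re

# Matches the "PID/Program name" column, e.g. "2231/agentd".
PID_PROG = re.compile(r"^(\d+)/(\S+)$")

# Illustrative sample only; "agentd" is a hypothetical agent process name.
SAMPLE = """\
Proto Recv-Q Send-Q Local Address           Foreign Address         State       PID/Program name
tcp        0      0 0.0.0.0:22              0.0.0.0:*               LISTEN      812/sshd
tcp        0      0 127.0.0.1:8080          0.0.0.0:*               LISTEN      2231/agentd
tcp        0      0 0.0.0.0:4444            0.0.0.0:*               LISTEN      2231/agentd
"""


def unexpected_listeners(netstat_output, process_name, allowed_ports):
    """Return (pid, port) pairs where process_name listens outside allowed_ports."""
    findings = []
    for line in netstat_output.splitlines():
        fields = line.split()
        if len(fields) < 5 or not fields[0].startswith(("tcp", "udp")):
            continue  # skip the header and unrelated lines
        port_text = fields[3].rsplit(":", 1)[-1]
        if not port_text.isdigit():
            continue
        # UDP rows have no State column, so search for the PID/Program field.
        owner = next((m for m in map(PID_PROG.match, fields[4:]) if m), None)
        if owner is None or owner.group(2) != process_name:
            continue
        port = int(port_text)
        if port not in allowed_ports:
            findings.append((int(owner.group(1)), port))
    return findings


print(unexpected_listeners(SAMPLE, "agentd", {8080}))
```

Feeding it the live output of `netstat -tulnp` on a schedule gives a simple baseline check; it does not replace packet-level analysis.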

Root Cause Analysis: The Trust-Based Authentication Gap

The root cause of the Ghost Command vulnerability is the “implicit trust” model used in agent-to-OS communication. Most agents do not re-validate their identity for every command they execute; instead, they rely on an initial session handshake. This lack of per-command authentication allows an attacker to “piggyback” on an active session. At the protocol level, this mirrors a Man-in-the-Middle (MITM) attack, but the “middleman” is the hijacked AI agent itself. Without a zero-trust architecture at the system-call level, the operating system remains blind to the malicious intent of authenticated processes.
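To make the idea of per-command authentication concrete, here is a hedged sketch in which every command carries its own HMAC and a one-time nonce, so a copied or replayed message is rejected. `sign_command` and `CommandGate` are hypothetical names, and the message layout is an assumption for this example. The scheme only helps if the key lives outside the agent's source code, since a key that leaks with the code can be used to forge valid messages.

```python
import hashlib
import hmac
import secrets


def _mac(key, nonce, command):
    """HMAC-SHA256 over the nonce and the command text."""
    return hmac.new(key, f"{nonce}:{command}".encode(), hashlib.sha256).hexdigest()


def sign_command(key, command):
    """Agent side: attach a fresh nonce and a MAC to a single command."""
    nonce = secrets.token_hex(16)
    return {"command": command, "nonce": nonce, "mac": _mac(key, nonce, command)}


class CommandGate:
    """Executor side: verify each command on its own, not once per session."""

    def __init__(self, key):
        self._key = key
        self._seen_nonces = set()  # in-memory only; a real gate would bound this

    def accept(self, message):
        command = message.get("command", "")
        nonce = message.get("nonce", "")
        mac = message.get("mac", "")
        expected = _mac(self._key, nonce, command)
        if not hmac.compare_digest(mac.encode(), expected.encode()):
            return False  # forged or altered command
        if nonce in self._seen_nonces:
            return False  # replay of a previously accepted command
        self._seen_nonces.add(nonce)
        return True
```

Because the MAC covers the command text, an attacker who piggybacks on an open session cannot swap in a different command without the key.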

Architecture Insight: Why Perimeter Firewalls Fail AI Agents

Traditional Web Application Firewalls (WAFs) stop external threats from entering the network, but they cannot block threats that originate from a trusted internal process. An AI agent running on a production server operates inside the firewall, giving it a privileged vantage point. If a hacker hijacks the agent using leaked source code, they can use it as a proxy to crawl the local network. This internal movement bypasses edge security, proving that cloud infrastructure monitoring must evolve to include eBPF-based system call tracking to detect unauthorized execve calls.

Step-by-Step Resolution: Stripping Administrative Privileges

The first step to neutralizing a hijacked agent is the immediate enforcement of the least-privilege model. Administrators must create a dedicated, non-privileged system user for each AI agent. This user should only have access to a specific “jail” directory, should be forbidden from executing sensitive binaries like wget or curl, and should hold no general sudo rights. By using visudo to allowlist only the specific, harmless commands the agent actually needs, you ensure that a Ghost Command attempting to install a rootkit fails with a “Permission denied” error instead of running as root.
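The same allowlist idea can also be applied inside the agent's own command runner, as a second layer on top of the operating system's permissions. The sketch below is an assumption-laden example: `ALLOWED_BINARIES` and `is_permitted` are hypothetical, and the listed paths are placeholders. It complements sudoers and file permissions rather than replacing them.

```python
import shlex

# Hypothetical allowlist of absolute paths the agent may execute.
ALLOWED_BINARIES = {"/usr/bin/systemctl", "/usr/bin/journalctl"}

# Characters that would let one command line smuggle in a second command.
SHELL_METACHARACTERS = set(";|&`$><\n")


def is_permitted(command_line, allowed=ALLOWED_BINARIES):
    """Return True only for a single command whose binary is on the allowlist."""
    if SHELL_METACHARACTERS & set(command_line):
        return False
    try:
        argv = shlex.split(command_line)
    except ValueError:
        return False  # unbalanced quotes and similar parse errors
    if not argv:
        return False
    return argv[0] in allowed
```

Requiring absolute paths avoids PATH tricks, and rejecting shell metacharacters keeps a permitted command from being chained to a forbidden one.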

Advanced Fix: Hardware-Level Isolation via Confidential Computing

For a much stronger defense, senior engineers deploy AI agents within confidential computing enclaves. Technologies like Intel SGX or the confidential computing mode of NVIDIA’s Hopper architecture provide a “Trusted Execution Environment” (TEE) that encrypts the agent’s memory and code at the hardware level. Even if an attacker gains root access to the host OS, they should not be able to inspect or modify the data inside the enclave. This keeps the agent’s authentication tokens and session secrets hidden, making it far harder for a hacker to craft a functional Ghost Command even with leaked source code.

The Engineer’s Toolkit: Commands for Threat Hunting

Engineers must proactively hunt for signs of hijacked agents using standard Linux diagnostics. Use iotop -o to monitor for unusual disk activity that might signal the encryption phase of a ransomware attack initiated by an agent. Check /var/log/messages or /var/log/audit/audit.log for any USER_AUTH events associated with the agent’s service account. If you see rapid, repetitive authentication attempts, use iptables -I INPUT -s [attacker_ip] -j DROP to sever the connection while you rotate the agent’s API keys and session secrets; inserting the rule with -I places it at the top of the chain, whereas -A appends it after any earlier ACCEPT rules that might match first.
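The audit-log check above can be scripted. This sketch counts failed USER_AUTH records per account from lines in the common audit.log layout, where the account appears as `acct="name"` and the outcome as `res=failed`. The function names and the threshold are hypothetical, and some systems log the account field in other encodings, which this simple pattern would miss.

```python
import re
from collections import Counter

# Assumes the common layout: type=USER_AUTH ... acct="name" ... res=failed
USER_AUTH = re.compile(
    r'type=USER_AUTH\b.*?\bacct="(?P<acct>[^"]+)".*?\bres=(?P<res>\w+)'
)


def failed_auth_counts(lines):
    """Count failed USER_AUTH records per account name."""
    counts = Counter()
    for line in lines:
        match = USER_AUTH.match(line)
        if match and match.group("res") == "failed":
            counts[match.group("acct")] += 1
    return counts


def suspicious_accounts(lines, threshold=5):
    """Return accounts whose failed-authentication count reaches the threshold."""
    counts = failed_auth_counts(lines)
    return sorted(acct for acct, n in counts.items() if n >= threshold)
```

Called as `suspicious_accounts(open("/var/log/audit/audit.log"))`, it gives a quick list of accounts to investigate before blocking an address or rotating keys.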

Real-World Use Case: The “Mythos” Model Data Center Breach

In early 2026, attackers breached a high-profile data center by pairing a leaked AI model called “Mythos”, designed to find software bugs, with a hijacked developer AI agent. They used the agent’s root access to deploy Mythos internally, scanning 5,000 servers for zero-day vulnerabilities in under 60 seconds. The attack succeeded because the firm relied on traditional server health monitoring tools that failed to detect AI-speed lateral movement. Only organizations using managed server support services with AI-native WAFs successfully blocked the scan.

Implementing Ephemeral Sandboxed Containers

A production-ready AI strategy requires running every agent inside a disposable Docker or Podman container. These containers should be configured with a read-only root filesystem and no network access unless explicitly required for the task. Use cgroups to limit the agent’s CPU and memory usage so that a hijacked agent cannot be used for cryptojacking. By setting the container to auto-delete (--rm) upon completion, you ensure that any malicious artifacts or backdoors planted by an attacker are discarded with the container instead of persisting on the host.
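One way to apply these settings consistently is to build the `docker run` command in one place. The sketch below assembles an argument list using standard Docker flags (`--rm`, `--read-only`, `--network none`, `--memory`, `--cpus`, `--cap-drop`, `--security-opt`, `--pids-limit`). The function name, the default limits, and the image name in the usage note are assumptions chosen for illustration.

```python
def sandbox_command(image, task_argv, memory="256m", cpus="0.5", allow_network=False):
    """Build a `docker run` argv for a disposable, locked-down agent container."""
    cmd = [
        "docker", "run",
        "--rm",                                   # delete the container on exit
        "--read-only",                            # read-only root filesystem
        "--cap-drop", "ALL",                      # drop all Linux capabilities
        "--security-opt", "no-new-privileges",    # block setuid escalation
        "--pids-limit", "64",                     # cap the number of processes
        "--memory", memory,                       # cgroup memory limit
        "--cpus", cpus,                           # cgroup CPU limit
    ]
    if not allow_network:
        cmd += ["--network", "none"]              # no network unless required
    cmd.append(image)
    cmd.extend(task_argv)
    return cmd
```

The result can be passed to `subprocess.run(sandbox_command("example/agent:latest", ["python", "task.py"]), check=True)`. Passing a list instead of a shell string means the task arguments are never interpreted by a shell.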

Hardening the cPanel and Web Hosting Environment

For businesses using cPanel or Plesk, AI agents must be isolated within CageFS or an LVE (Lightweight Virtualized Environment). This prevents “cross-account” contamination, where a hijacked agent in one hosting account attempts to read the configuration files of another. Applying standard cPanel security hardening measures, such as disabling mod_userdir and enabling Shell Fork Bomb Protection, adds a layer of defense against agents being used to launch Denial of Service (DoS) attacks from within the local infrastructure.

Server Monitoring Services 24/7: The Human-AI Hybrid Defense

While AI tools operate at machine speed, the human element remains the final arbiter of security. 24/7 server monitoring services provide a critical layer of oversight that catches the subtle signs of “Ghost Command” activity. Professional SOC analysts look for “impossible travel” patterns in API logs and correlate server metrics with network traffic. If the usual fixes for high Apache CPU usage aren’t working, it may be because an agent is hiding a mining script behind a web server process, a nuance that human-led remote server management is better placed to identify.

Future-Proofing with AI-Native Firewalls and WAFs

By late 2026, the industry is expected to shift toward AI-native firewalls that understand the “intent” of a command sequence. These systems don’t just block unauthorized IPs; they analyze the semantics of the instructions being sent to the server. If an AI agent suddenly requests to change the sshd_config or add a new SSH key to the authorized_keys file, the AI-native WAF identifies this as a high-risk deviation from the baseline and automatically suspends the agent’s session until a human administrator approves the action.

The Dangers of “Too Many Connections” and Agent Overload

In many cases, a hijacked or poorly configured AI agent can trigger MySQL’s “Too many connections” error by attempting to scrape or modify a database at an unsustainable rate. This often looks like a standard performance issue, but it can be a “smoke screen” for a deeper attack. When a server slows down after running for some time, engineers should check the connection table using SHOW PROCESSLIST; in MySQL to see if an AI agent service account is hoarding connections, which can be a precursor to an injection attack.
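Reading a long process list by eye is error-prone, so a small tally helps. The sketch below counts connections per account from rows shaped like the output of SHOW PROCESSLIST, using its standard `User` column. It assumes the rows arrive as dictionaries, as a dict-style database cursor would return them; the function name and the threshold are hypothetical.

```python
from collections import Counter


def connection_hogs(rows, threshold):
    """Return {user: connection_count} for accounts at or above the threshold.

    `rows` is assumed to be an iterable of dicts with a "User" key, matching
    the User column of MySQL's SHOW PROCESSLIST output.
    """
    per_user = Counter(row["User"] for row in rows)
    return {user: count for user, count in per_user.items() if count >= threshold}
```

If the agent's service account dominates the result while application accounts look normal, the slowdown is more likely an agent problem than ordinary load.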

Securing Linux Servers from Hackers: The Zero-Trust Checklist

To secure a Linux server from hackers in the age of AI, every organization must adopt a zero-trust checklist. This includes enforcing MFA for all API access, disabling root SSH logins, and using AIDE (Advanced Intrusion Detection Environment) to monitor for unauthorized changes to system binaries. Conduct regular audits against 2026 server security best practices that include a “Red Team” exercise, where you task an AI model with finding ways to hijack your organization’s own agents, so that your defenses stay one step ahead of threats.
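The principle behind file-integrity monitoring is simple enough to sketch: record a hash of each watched file, then compare later snapshots against that baseline. The code below illustrates the idea only and is not how AIDE itself is implemented or configured; `snapshot` and `changed_files` are hypothetical names.

```python
import hashlib
from pathlib import Path


def snapshot(paths):
    """Map each path to the SHA-256 hex digest of its current contents."""
    return {str(p): hashlib.sha256(Path(p).read_bytes()).hexdigest() for p in paths}


def changed_files(baseline, current):
    """Return paths that were added, removed, or modified since the baseline."""
    all_paths = baseline.keys() | current.keys()
    return sorted(p for p in all_paths if baseline.get(p) != current.get(p))
```

For the comparison to be trustworthy, the baseline must be stored where the agent's account cannot rewrite it, such as read-only media or a separate host.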

FAQ: Securing Your Private Network from AI Threats

Who has access to my AI agent?

If your agent’s source code or API keys leak, anyone can take control of it. You must use hardware security modules (HSMs) to store sensitive keys.

How do I stop Ghost Commands?

Remove implicit trust. Use eBPF monitoring to validate every system call the AI agent makes against a strict allowlist.

Why is my server slow when the AI agent is running?

The agent may be performing recursive file scans or running unauthorized background tasks. The resulting high CPU usage, which often shows up in Apache processes, slows the whole server.

What is the best way to monitor AI agents?

Use server health monitoring tools and techniques that track process-level behavior and network patterns, not just CPU and RAM usage.

Can an AI agent bypass my firewall?

Yes, if it runs inside your network, it can use its trusted status to tunnel data through encrypted HTTPS (port 443).

Authoritative Conclusion on the AI Security Paradigm

The rise of autonomous agents has fundamentally changed the risk profile of cloud infrastructure. A leaked AI agent is no longer a minor data leak; it is a structural vulnerability that can grant root access via the Ghost Command exploit. CTOs must move beyond the “convenience” of high-privilege AI and adopt a rigorous, isolation-centric architecture. By adopting Linux server management that prioritizes server hardening and confidential computing, businesses can embrace the AI revolution without leaving the front door to their private network wide open.