Summary:

Cloud infrastructure management uses a centralized software layer to orchestrate, monitor, and secure virtualized resources, including compute, storage, and networking. A hypervisor abstracts the physical hardware, allowing engineers to deploy and scale environments through Infrastructure as Code (IaC) and automated APIs. By integrating real-time server monitoring tools, organizations can maintain high availability, control costs, and mitigate threats proactively across multi-cloud and hybrid ecosystems.

To understand how cloud infrastructure management works, architects must prioritize four pillars: automated orchestration, continuous monitoring, security hardening, and cost optimization. Modern frameworks use AWS management services and Kubernetes to run containerized workloads with self-healing capabilities. Engineers must transition from manual configuration to declarative models to reduce human error and maintain environment parity. Managed server support services let teams offload the complexity of hypervisor maintenance and hardware lifecycles. Finally, always verify connectivity to your management plane with nmap or telnet after changing firewall or security group rules to prevent administrative lockouts.

Defining the Impact of Modern Infrastructure Management

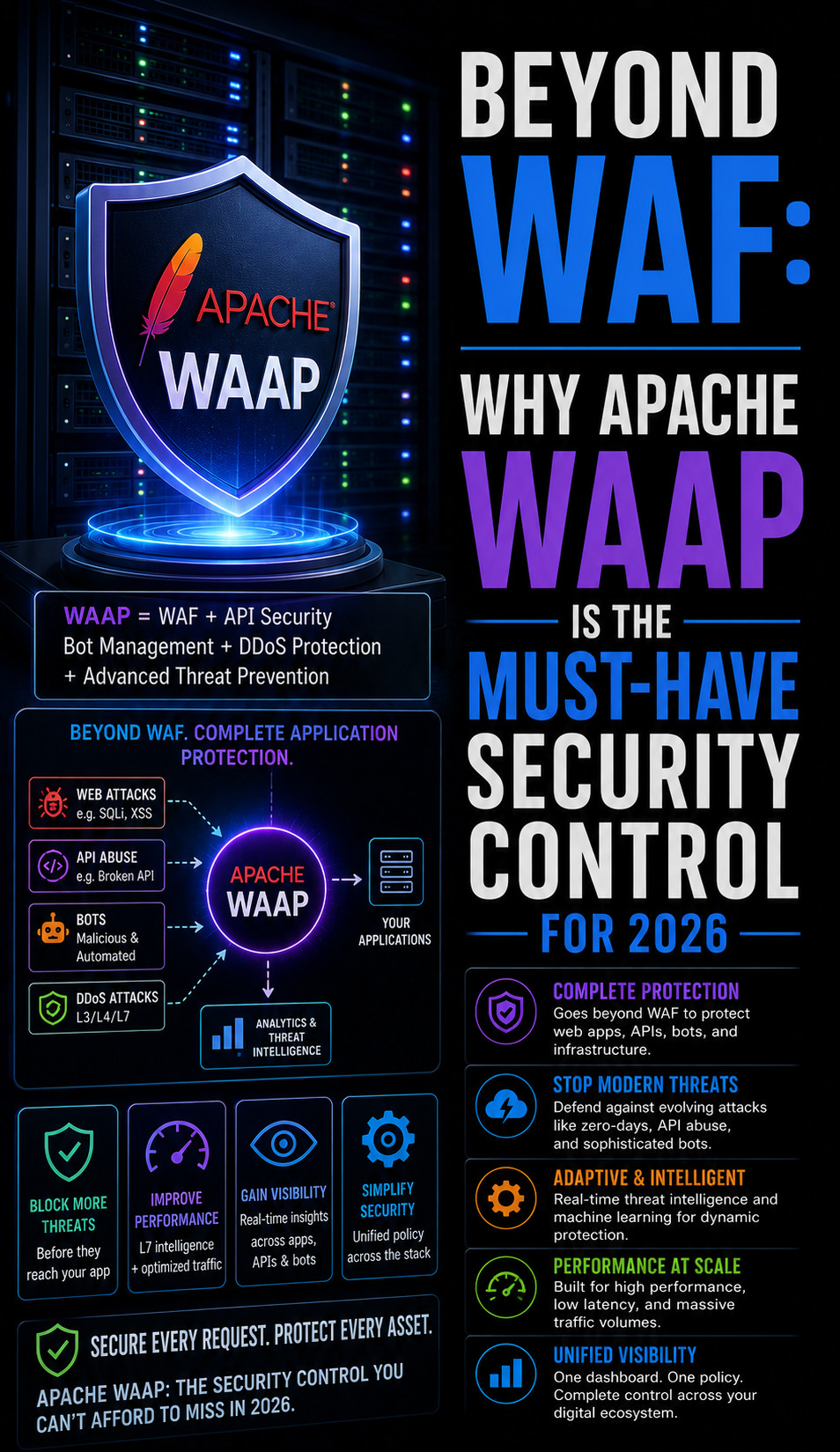

Inefficient infrastructure management leads directly to production outages and spiraling operational costs. When an organization lacks a cohesive strategy for cloud infrastructure management, it faces “cloud sprawl” and security vulnerabilities. As a Lead Technical Architect, I think of the management layer as the operating system of the data center. It dictates how the system responds to a sudden Apache CPU spike or a volumetric DDoS attack. Without 24/7 server monitoring, your infrastructure remains a black box that reveals its flaws only during a catastrophic failure.

Problem Statement: The Complexity of Distributed Systems

The primary challenge in modern cloud environments is the sheer volume of ephemeral resources. Traditional Linux server management relied on static IP addresses and long-lived physical servers. Today, instances spin up and down in seconds, making manual tracking impractical. This volatility complicates troubleshooting when a website goes down, because “phantom” resources and stale DNS records obscure the real state of the environment. Organizations struggle to maintain visibility, leading to unpatched vulnerabilities and orphaned storage volumes that drain budgets without providing value.

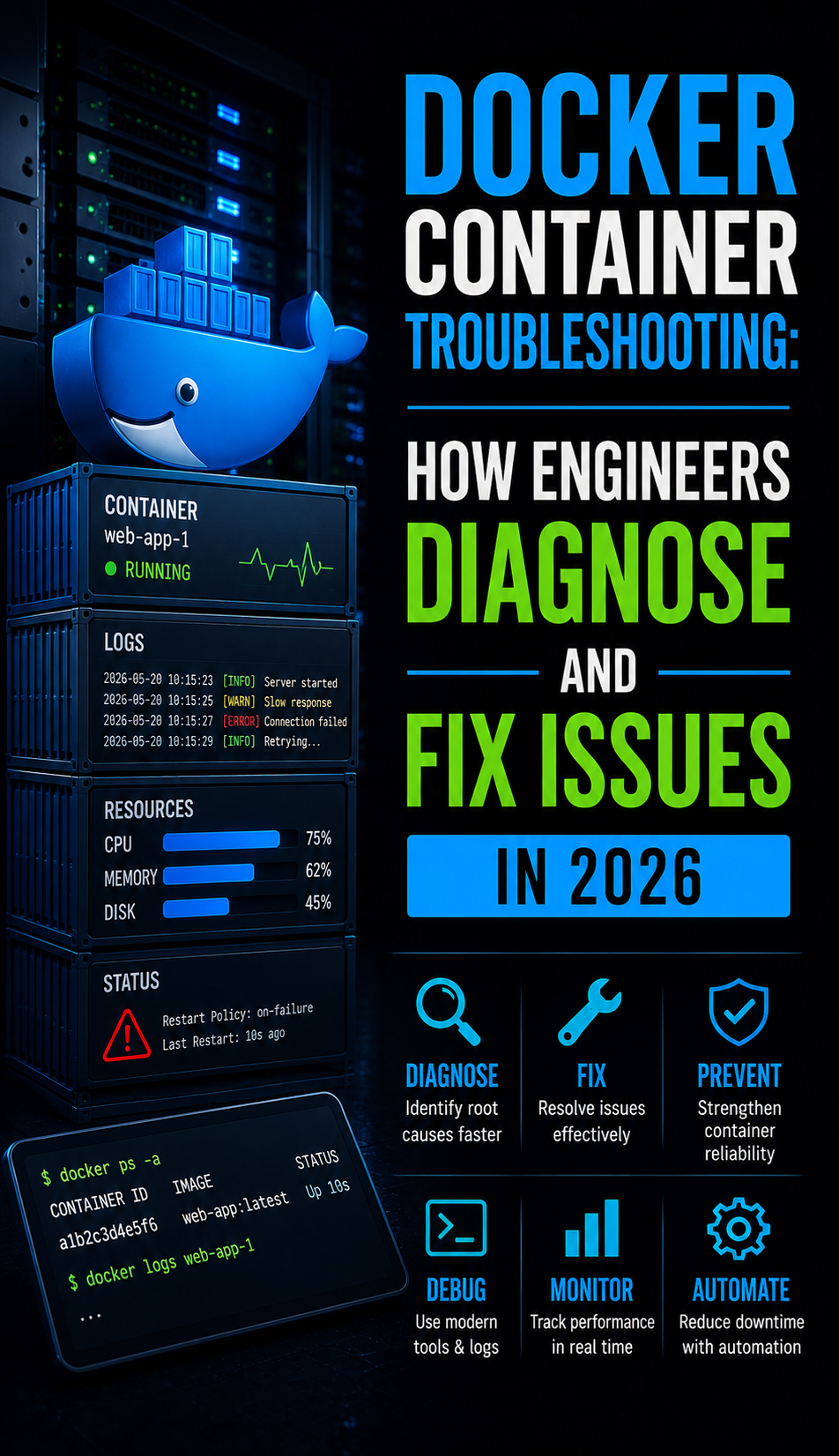

Root Cause Analysis: Why Infrastructure Management Fails

Failures in cloud management usually stem from a lack of state synchronization between the management plane and the actual data plane. For example, a MySQL “Too many connections” error might occur because the auto-scaler failed to detect database pressure. At the network level, these failures often manifest as ECONNREFUSED errors when a load balancer routes traffic to a non-existent or uninitialized node. Kernel-level limits on the host machine, such as fs.file-max or net.ipv4.ip_local_port_range, can also starve management agents of file handles or ephemeral ports and cause them to crash, leaving the infrastructure unmonitored and vulnerable.
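To make the kernel-limit point concrete, here is a minimal Python sketch that estimates how close a host is to the limits named above. It assumes the standard Linux layout of /proc/sys/fs/file-nr (allocated handles, unused handles, maximum) and of /proc/sys/net/ipv4/ip_local_port_range (low and high port). The function names are hypothetical and chosen only for illustration.

```python
def file_handle_usage(file_nr_text: str) -> float:
    """Return the fraction of the system-wide file-handle limit in use.

    Expects the contents of /proc/sys/fs/file-nr: three integers giving
    allocated handles, allocated-but-unused handles, and the maximum.
    """
    allocated, unused, maximum = (int(x) for x in file_nr_text.split())
    return (allocated - unused) / maximum


def ephemeral_port_count(port_range_text: str) -> int:
    """Return how many local ports net.ipv4.ip_local_port_range allows."""
    low, high = (int(x) for x in port_range_text.split())
    return high - low + 1


def read_text(path: str) -> str:
    """Read a small /proc file as text."""
    with open(path, encoding="ascii") as handle:
        return handle.read()
```

On a Linux host, file_handle_usage(read_text("/proc/sys/fs/file-nr")) returns a value between 0 and 1. An alert threshold such as 0.8 is a judgment call, not a kernel recommendation.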

Solution Phase 1: Implementing Infrastructure as Code (IaC)

The first step in a professional cloud infrastructure management workflow is adopting IaC tools like Terraform or Pulumi. This allows you to define your entire network topology, including VPCs, subnets, and security groups, in version-controlled text files. By treating infrastructure as software, you make every deployment identical and reproducible. This eliminates the “it works on my machine” syndrome and provides a clear audit trail for security reviews. IaC also simplifies the deployment of white-label server support environments for resellers and multi-tenant platforms.
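The declarative idea behind these tools can be sketched in a few lines of Python: compare the desired state from version control with the actual state reported by the provider, then derive a plan. This is a simplified illustration of the concept, not the Terraform or Pulumi API. The resource names and the plan function are hypothetical.

```python
from typing import Any, Dict, List

# A resource map: resource name -> its declared attributes.
Resources = Dict[str, Dict[str, Any]]


def plan(desired: Resources, actual: Resources) -> Dict[str, List[str]]:
    """Diff desired state against actual state and list the required actions."""
    create = sorted(name for name in desired if name not in actual)
    delete = sorted(name for name in actual if name not in desired)
    update = sorted(
        name
        for name in desired
        if name in actual and desired[name] != actual[name]
    )
    return {"create": create, "update": update, "delete": delete}


# Desired state as it might be declared in a version-controlled file.
desired_state = {
    "vpc-main": {"cidr": "10.0.0.0/16"},
    "subnet-a": {"cidr": "10.0.1.0/24"},
    "sg-web": {"ingress": [80, 443]},
}
# Actual state as it might be reported by the provider.
actual_state = {
    "vpc-main": {"cidr": "10.0.0.0/16"},
    "subnet-a": {"cidr": "10.0.2.0/24"},
    "sg-old": {"ingress": [22]},
}
changes = plan(desired_state, actual_state)
```

Because the plan is computed from data rather than from a sequence of manual steps, running it twice against an unchanged environment yields no actions, which is the property that keeps environments in parity.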

Solution Phase 2: Centralizing Visibility with Monitoring

Effective cloud infrastructure monitoring requires a “Single Pane of Glass” view. Integrate real-time server monitoring tools such as Prometheus and Grafana to track metrics across all provider regions. These tools collect telemetry data via agents installed on each instance or through native cloud APIs. Monitoring must extend beyond simple CPU/RAM usage to include “I/O Wait,” “Disk Latency,” and “Network Throughput.” High-resolution monitoring allows engineers to identify why a server slows down over time by correlating performance dips with specific deployment timestamps or scheduled tasks.
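As an illustration of what an agent computes for the “I/O Wait” metric, the following Python sketch derives the I/O wait percentage from two samples of the aggregate cpu line in /proc/stat. It assumes the usual Linux field order (user, nice, system, idle, iowait, irq, softirq, steal). The function names are hypothetical, and a production agent would handle per-core lines and counter resets as well.

```python
from typing import List


def cpu_times(stat_line: str) -> List[int]:
    """Parse the aggregate 'cpu' line of /proc/stat into its first 8 counters."""
    fields = stat_line.split()
    if not fields or fields[0] != "cpu":
        raise ValueError("expected the aggregate 'cpu' line from /proc/stat")
    # user, nice, system, idle, iowait, irq, softirq, steal
    # (guest time is already included in user, so it is left out of the total)
    return [int(value) for value in fields[1:9]]


def iowait_percent(before: str, after: str) -> float:
    """Return the share of CPU time spent waiting on I/O between two samples."""
    earlier, later = cpu_times(before), cpu_times(after)
    total_delta = sum(later) - sum(earlier)
    if total_delta <= 0:
        return 0.0
    iowait_delta = later[4] - earlier[4]
    return 100.0 * iowait_delta / total_delta
```

Taking the two samples a few seconds apart and recording the timestamp with each result is what makes it possible to line up a spike with a deployment or a cron job later.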

Solution Phase 3: Automated Scaling and Self-Healing

A core benefit of cloud infrastructure management is the ability to automate responses to resource pressure. Configure “Auto-Scaling Groups” that trigger based on CPU or memory thresholds. When a node experiences high Apache CPU usage, the management layer automatically provisions a new instance and registers it with the load balancer. Simultaneously, self-healing mechanisms should detect “Unhealthy” nodes and terminate them. This ensures that a local failure, such as a disk reaching 100% usage on a single Linux node, does not escalate into a global outage.
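The scaling and self-healing logic can be sketched as two small Python functions. The capacity rule below is the proportional formula used by the Kubernetes Horizontal Pod Autoscaler (current size times observed metric divided by target, rounded up), applied here as an assumption about how a group might be sized. The health-status strings and function names are hypothetical.

```python
import math
from typing import Dict, List


def desired_capacity(
    current_nodes: int,
    observed_cpu: float,
    target_cpu: float,
    min_nodes: int,
    max_nodes: int,
) -> int:
    """Scale the group proportionally to CPU pressure, within fixed bounds."""
    wanted = math.ceil(current_nodes * observed_cpu / target_cpu)
    return max(min_nodes, min(max_nodes, wanted))


def nodes_to_replace(health: Dict[str, str]) -> List[str]:
    """Return the nodes whose last health check was anything but 'healthy'."""
    return sorted(name for name, status in health.items() if status != "healthy")
```

For example, four nodes averaging 90% CPU against a 60% target yield a desired capacity of six, while the minimum and maximum bounds prevent a metric glitch from scaling the group to zero or to an unbounded size.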

Advanced Fix: Tuning Kernel Parameters for High-Concurrency

Senior engineers must look beyond the GUI and tune the underlying Linux kernel so the management plane keeps functioning under load. Use sysctl -w net.core.somaxconn=1024 to raise the cap on each listening socket’s accept queue; check the current value first, because recent kernels may already default to a higher limit. In a cPanel server management context, you should also monitor the entropy pool via /proc/sys/kernel/random/entropy_avail. On older kernels, low entropy can stall SSL/TLS handshakes, so requests queue up and load climbs. Running an entropy daemon such as haveged on those systems is an “engineer-level” fix that keeps remote server management services responsive during high-traffic periods.
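One way to avoid accidentally lowering a limit is to generate sysctl commands only for parameters that sit below a chosen floor. The Python sketch below does that. The floor values are assumptions for illustration, not recommendations, and the function name is hypothetical.

```python
from typing import Dict, List


def sysctl_raises(current: Dict[str, int], minimums: Dict[str, int]) -> List[str]:
    """Build 'sysctl -w' commands for parameters below their desired floor.

    Parameters that already meet or exceed the floor are left alone, so the
    output never lowers a limit that the distribution set higher.
    """
    commands = []
    for key in sorted(minimums):
        # A key missing from `current` is treated as 0, so it is always raised.
        if current.get(key, 0) < minimums[key]:
            commands.append(f"sysctl -w {key}={minimums[key]}")
    return commands


# Hypothetical floors for a busy management node.
floors = {"net.core.somaxconn": 1024, "fs.file-max": 1000000}
```

The current values can be read from the matching files under /proc/sys (for example /proc/sys/net/core/somaxconn), and the resulting commands reviewed before anyone runs them as root.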

Essential Toolkit: Commands for Cloud Diagnostics

Architects must master a specific command set to verify the state of their cloud resources. Use nmap -p 22,80,443 [Instance_IP] to check if your security groups are correctly permitting traffic. Execute tcpdump -nnvvS to inspect packet headers for dropped connections or fragmented packets. If you suspect an ECONNREFUSED issue, use netstat -an | grep LISTEN to confirm the service daemon is actually bound to the expected port. These tools are the backbone of remote server management services, providing the granular data needed to debug complex network paths through virtual gateways.
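When nmap is not installed on a jump host, a similar reachability check can be scripted with the Python standard library. The sketch below classifies a TCP port by how the connection attempt fails. The labels are my own shorthand: “refused” corresponds to ECONNREFUSED (nothing is listening), and “filtered” means the attempt timed out, which commonly points to a security group or firewall silently dropping packets.

```python
import socket


def probe_port(host: str, port: int, timeout: float = 2.0) -> str:
    """Attempt a TCP connection and classify the result."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return "open"
    except ConnectionRefusedError:
        # The host answered with a reset: reachable, but no listener on the port.
        return "refused"
    except socket.timeout:
        # No answer at all within the timeout: packets are probably dropped.
        return "filtered"
    except OSError:
        # Other failures, such as no route to host or DNS resolution errors.
        return "unreachable"
```

Calling probe_port for ports 22, 80, and 443 against an instance gives a quick first answer to whether the problem sits in the service (refused) or in the network path (filtered).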

Architecture Insight: Active vs. Passive Management Planes

Management planes can operate in either “Active” or “Passive” modes. Active management agents (like those used in AWS management services) constantly push heartbeats and telemetry data to a central controller. Passive management relies on the controller periodically “polling” the nodes. In a high-security, hardened environment, passive polling is often preferred because it minimizes the number of outgoing connections from the production nodes. However, active agents detect outages faster, making them the standard for real-time, mission-critical infrastructure.
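On the controller side, the active model reduces to tracking when each agent last reported and flagging the ones that have gone quiet. A minimal Python sketch, assuming heartbeats are stored as Unix timestamps and using a hypothetical 30-second timeout:

```python
from typing import Dict, List


def stale_nodes(
    last_heartbeat: Dict[str, float],
    now: float,
    timeout_seconds: float = 30.0,
) -> List[str]:
    """Return nodes whose most recent heartbeat is older than the timeout."""
    return sorted(
        node
        for node, seen_at in last_heartbeat.items()
        if now - seen_at > timeout_seconds
    )
```

Detection latency in this model is bounded by the heartbeat interval plus the timeout, whereas in the passive model it is bounded by the polling interval, which is the trade-off described above.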

Real-World Use Case: Resolving Passive Port Blocks in the Cloud

A client recently moved their cPanel environment to a cloud provider and immediately lost FTP access. Although the service was running, directory listings (the “MLSD” command) timed out. The root cause was the cloud security group blocking the high-numbered passive port range. By aligning the PassivePortRange in the FTP configuration with the cloud’s Inbound Rules (e.g., ports 49152-65534), we restored connectivity. This demonstrates that cloud infrastructure management depends heavily on synchronizing local software settings with the provider’s network access control lists (ACLs).
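This kind of mismatch can be caught before users notice by comparing the configured passive range with the allowed inbound ranges. The Python sketch below returns the parts of a port range that no rule covers. The rule representation as (low, high) tuples is an assumption for illustration. Real security group rules also carry protocol and source fields that this sketch ignores.

```python
from typing import List, Tuple

PortRange = Tuple[int, int]


def uncovered_ports(needed: PortRange, allowed: List[PortRange]) -> List[PortRange]:
    """Return the sub-ranges of `needed` that no allowed range covers."""
    low, high = needed
    gaps: List[PortRange] = []
    cursor = low  # lowest port not yet known to be covered
    for start, end in sorted(allowed):
        if end < cursor:
            continue  # rule lies entirely below the part still unchecked
        if start > high:
            break  # rule (and all later ones) lies above the needed range
        if start > cursor:
            gaps.append((cursor, start - 1))
        cursor = max(cursor, end + 1)
        if cursor > high:
            break
    if cursor <= high:
        gaps.append((cursor, high))
    return gaps
```

An empty result means the passive range is fully permitted. Any returned tuple is a range that data connections may be assigned to but cannot reach.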

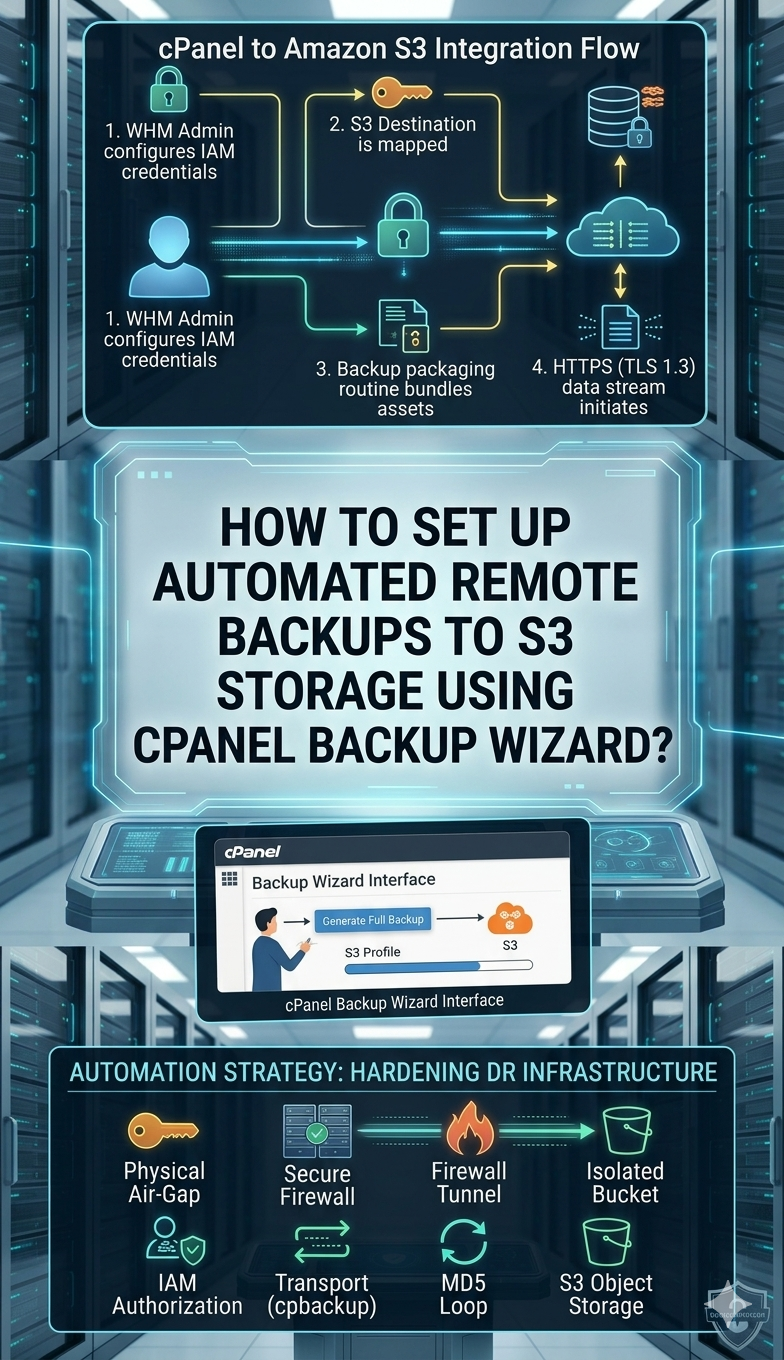

Hardening the Management Interface

Security is the most critical component of cloud infrastructure management. You must protect the management console and APIs with Multi-Factor Authentication (MFA) and IP allowlisting. Following the cPanel security hardening guide, engineers should disable password-based logins and move to SSH key authentication on every Linux server they manage. Additionally, implement “Identity and Access Management” (IAM) roles that follow the “Principle of Least Privilege.” This limits the damage a compromised service account can do, so that it cannot delete your entire production cluster.
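A simple audit can surface the policies that most obviously violate least privilege. The Python sketch below scans a policy document in the AWS IAM JSON shape (a Statement list with Effect, Action, and Resource keys) and flags Allow statements that grant wildcard actions. It is a heuristic for illustration only, and the function names are hypothetical. It does not evaluate conditions, NotAction, or resource scoping.

```python
from typing import Any, Dict, List


def as_list(value: Any) -> List[str]:
    """IAM allows a single string or a list; normalize to a list."""
    return [value] if isinstance(value, str) else list(value)


def broad_statements(policy: Dict[str, Any]) -> List[int]:
    """Return indexes of Allow statements that grant '*' or 'service:*' actions."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):
        statements = [statements]
    flagged = []
    for index, statement in enumerate(statements):
        if statement.get("Effect") != "Allow":
            continue
        actions = as_list(statement.get("Action", []))
        if any(action == "*" or action.endswith(":*") for action in actions):
            flagged.append(index)
    return flagged
```

Flagged statements are candidates for review rather than automatic rejection, since some administrative roles legitimately need broad access.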

Benefits of Outsourcing Cloud Infrastructure Management

Many businesses realize the benefits of outsourcing technical support when they face the complexity of multi-cloud environments. An outsourced server management company provides the expertise to manage AWS, Azure, and Google Cloud under a unified framework. This allows internal teams to focus on application development while the outsourced hosting support team handles the 24/7 work of kernel patching and security monitoring. Professional managed server support services also help keep your infrastructure compliant with evolving industry standards.

Why Businesses Need Server Monitoring for Cloud ROI

Organizations often ask why they need server monitoring when the cloud is supposedly “managed” by the provider. On virtual server (IaaS) offerings, the provider manages only the physical hardware and the virtualization layer; you are responsible for the performance of the OS and applications. Without 24/7 server monitoring, you cannot identify “zombie” instances or over-provisioned resources that inflate your bill. Monitoring provides the data needed for “Rightsizing,” which is the process of matching instance types to their actual workload. This is a key part of cloud infrastructure monitoring best practices.
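Rightsizing starts with a simple question: which instances never come close to using what they are allocated? A minimal Python sketch, assuming CPU utilization samples (in percent) have already been exported per instance. The thresholds of 10% average and 40% peak are hypothetical starting points, not provider guidance.

```python
from statistics import mean
from typing import Dict, List


def rightsizing_candidates(
    cpu_samples: Dict[str, List[float]],
    max_average: float = 10.0,
    max_peak: float = 40.0,
) -> List[str]:
    """Return instances whose average and peak CPU both stay under the limits."""
    candidates = []
    for instance, samples in cpu_samples.items():
        if not samples:
            continue  # no data: do not guess
        if mean(samples) < max_average and max(samples) < max_peak:
            candidates.append(instance)
    return sorted(candidates)
```

Checking the peak as well as the average avoids downsizing an instance that idles most of the day but needs its full capacity during a nightly batch job. Memory and I/O deserve the same treatment before any instance type is changed.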

Future-Proofing with Server Security Best Practices 2026

In 2026, the management layer should include AI-driven anomaly detection. Current server security best practices use machine learning to distinguish between a legitimate traffic spike and a sophisticated botnet attack. Proactive server hardening now includes “Immutable Infrastructure,” where servers are never patched in place; instead, they are replaced with new, updated images. Because any unauthorized change disappears at the next replacement, this approach makes it much harder for attackers to persist on a Linux server.

Step-by-Step Resolution: FTP Troubleshooting in Cloud Environments

If your cloud-based FTP fails, follow this protocol. First, verify the listener using telnet [IP] 21. If it connects, the command channel is open. Second, check the passive port range in your server configuration (PassivePortRange in Pure-FTPd; ProFTPD names the equivalent directive PassivePorts). Third, ensure your cloud security group and local CSF firewall permit traffic on that specific range. Finally, use a client like FileZilla with Level 3 debugging enabled to identify whether the error is a “TLS Handshake” failure or a “Data Socket” timeout. This methodical approach is the hallmark of 24/7 server management services.
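The second step can be scripted so the configured range is read from the file rather than from memory. The Python sketch below extracts the passive range from configuration text. It assumes the directive appears on its own line as a name followed by two port numbers, which matches the PassivePortRange form in pure-ftpd.conf and, to my understanding, the PassivePorts form in proftpd.conf. Other layouts, such as trailing comments, are not handled.

```python
import re
from typing import Optional, Tuple

# Directive name, then low and high port, alone on an uncommented line.
_PASSIVE_DIRECTIVE = re.compile(
    r"^[ \t]*(?:PassivePortRange|PassivePorts)[ \t]+(\d+)[ \t]+(\d+)[ \t]*$",
    re.MULTILINE,
)


def passive_port_range(config_text: str) -> Optional[Tuple[int, int]]:
    """Return (low, high) from the first passive-port directive, or None."""
    match = _PASSIVE_DIRECTIVE.search(config_text)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))
```

A result of None usually means the server falls back to its built-in default range, which is worth confirming in the FTP server's own documentation before opening ports in the security group.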

Understanding What Server Management Services Are

When clients ask what server management services are, we explain them as the complete lifecycle management of their digital assets. This encompasses everything from the initial server hardening to ongoing updates of the real-time monitoring stack. It is the bridge between raw hardware and a functional, secure application. By providing white-label server support, we enable agencies to offer these high-level architectural services to their own clients without the need for a massive internal engineering department.

Streamline Your Cloud Infrastructure for 2026

Eliminate operational bottlenecks with our 2026 Engineering Framework. From IaC automation to proactive L3 monitoring, we ensure your cloud environment is secure, scalable, and cost-optimized.

- Automated Orchestration
- 24/7 Proactive Monitoring
- Zero-Trust Hardening
- Multi-Cloud Expertise

Conclusion:

Understanding how cloud infrastructure management works is a prerequisite for any organization scaling in the 2026 digital economy. By moving away from manual, reactive administration and embracing automated, data-driven orchestration, you can realistically target 99.9% uptime and a stronger ROI. Whether you are fixing high Apache CPU usage on a single server or deploying a global multi-cloud cluster, the principles of IaC, continuous monitoring, and server hardening remain the gold standard.

FAQ: Cloud Management Essentials

How does cloud infrastructure management work for beginners?

It works by using a web dashboard or command-line tools to control virtual servers and networks. Instead of touching physical cables, you use software to tell the cloud provider what resources you need, and they provide them instantly.

What is the best way to monitor linux server performance in the cloud?

The best way is to use real-time server monitoring tools like Netdata or Prometheus. These tools provide a live view of CPU, memory, and disk health, allowing you to react to issues before they cause downtime.

How can I secure my cloud server from hackers?

You should follow a cPanel security hardening guide if you run cPanel, use SSH keys instead of passwords, enable MFA on your cloud console, and use a firewall to block all ports except those absolutely necessary for your website to function.

Why do businesses need server monitoring in 2026?

Businesses need it to ensure they aren’t wasting money on unused resources and to protect against modern cyber threats. Monitoring provides the “Heartbeat” of your business’s digital presence.